Why JavaScript Bundles Continue to Leak Undiscovered Secrets

The Silent Front-End Threat You Must Stop, Explained Simply

Table of Contents

- Executive Summary: The Hidden Epidemic

- How It Happens: From Code to Client-Side Exposure

- Real-World Impact & Case Studies

- The MITRE ATT&CK Connection

- Why Existing Detection Methods Fail

- Step-by-Step: Finding Your Own Bundle Secrets

- Common Mistakes & Best Practices

- Implementation Framework: A 5-Pillar Defense Strategy

- Frequently Asked Questions (FAQ)

- Key Takeaways & Call to Action

Executive Summary: The Hidden Epidemic

Imagine building a secure fortress with a massive steel door, bulletproof windows, and armed guards, then painting the access codes on the outside wall where anyone who knows to look can read them. This is the paradox of modern web application security: sensitive secrets such as API keys, database credentials, and access tokens are inadvertently baked into the public-facing JavaScript bundles that power single-page applications (SPAs).

Recent research scanning 5 million applications revealed a staggering 42,000+ exposed tokens across 334 different secret types. These leaks represent a critical security gap that traditional scanners are missing, creating a low-effort, high-reward entry point for attackers. Understanding and mitigating this risk is not optional; it's a fundamental requirement for securing today's web-first digital assets.

This guide explains how secrets end up in production JavaScript bundles and why current tools fail to catch them, then provides a clear, actionable framework for identifying and preventing this pervasive threat.

How It Happens: From Code to Client-Side Exposure

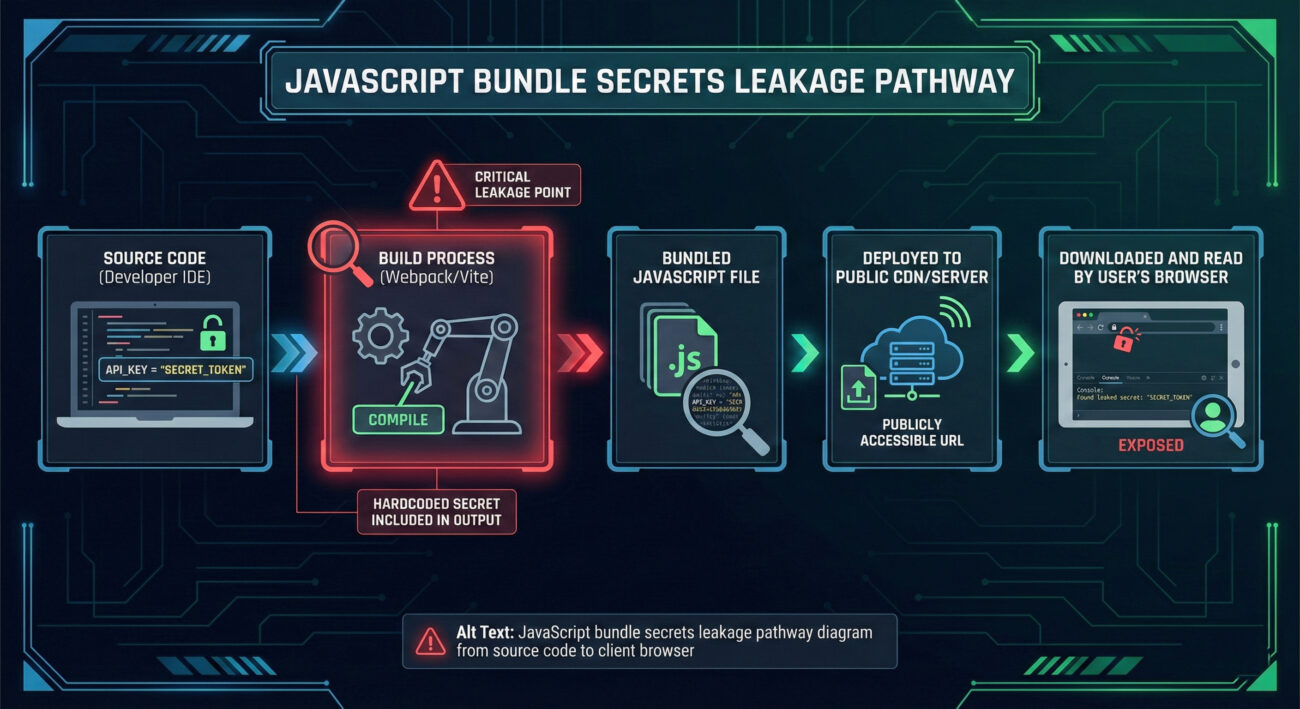

The path of a secret from a secure backend to a public-facing browser is often unintentional and stems from modern development practices. JavaScript bundle secrets don't appear by magic; they are the result of specific flaws in the development and deployment pipeline.

The Technical Root Cause: Build-Time Inclusion

Modern front-end applications use bundlers like Webpack, Vite, or Parcel. These tools take your source code, dependencies, and assets and package them into optimized "bundles" (like `app.abc123.js`) for the browser. The vulnerability occurs when these bundlers are configured to inline environment variables or when secrets are hardcoded in source files intended only for the build process.

Consider this flawed code pattern that leads directly to exposure:

```javascript
// config.js - A file that is processed by the bundler
// ⚠️ A secret ships either way: the build-time value if the bundler inlines
// process.env.MY_API_KEY, or the hardcoded fallback if it does not.
export const API_KEY = process.env.MY_API_KEY || 'glpat-myActualGitLabTokenHere12345';

// apiService.js
import { API_KEY } from './config.js';

// `async` is required because the function body uses `await`
export async function fetchData() {
  const response = await fetch('https://api.service.com/data', {
    headers: {
      'Authorization': `Bearer ${API_KEY}` // The secret is referenced here
    }
  });
  return response.json();
}
```

If the environment variable `MY_API_KEY` is not defined at build time, the hardcoded fallback string is what ships in the bundle. Even worse, if the variable is defined (for example, loaded from the developer's `.env` file), many bundler configurations inline its actual value into the bundle, exposing it to anyone who downloads the JavaScript file.
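To make the mechanism concrete, here is a minimal sketch of define-style replacement. The `inlineEnv` helper is hypothetical and only models the textual substitution; it is not the implementation of Webpack, Vite, or any other bundler.

```javascript
// Simplified model of build-time "define" replacement (hypothetical helper).
function inlineEnv(source, env) {
  // Replace each `process.env.NAME` expression with a JSON string literal,
  // or with `undefined` when the variable is not set at build time.
  return source.replace(/process\.env\.([A-Z0-9_]+)/g, (match, name) =>
    Object.prototype.hasOwnProperty.call(env, name)
      ? JSON.stringify(env[name])
      : 'undefined'
  );
}

const source = "const API_KEY = process.env.MY_API_KEY || 'fallback-secret';";

// Case 1: the variable is set in the build environment, so its value is inlined.
const builtWithEnv = inlineEnv(source, { MY_API_KEY: 'real-secret' });

// Case 2: the variable is missing, so the hardcoded fallback is what ships.
const builtWithoutEnv = inlineEnv(source, {});

console.log(builtWithEnv);
console.log(builtWithoutEnv);
```

In both cases the output text contains a usable credential, which is why the fallback pattern offers no protection.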

The Deployment Pipeline Gap

So-called "shift-left" security tools like Static Application Security Testing (SAST) and pre-commit hooks scan source code before it's merged. However, secrets can be introduced after these checks run, during the build or deployment stage.

- Build Script Secrets: A CI/CD pipeline script might fetch a token and pass it to the bundler as an argument, which gets inlined.

- Malicious or Compromised Dependencies: A malicious or compromised third-party npm package (introduced, for example, through dependency confusion) could harvest environment variables during the build.

- Misconfigured Bundler Plugins: Plugins like `DefinePlugin` or `dotenv-webpack` can be set to include all environment variables, not just safe ones.

The result? A final, minified JavaScript file delivered to your users that contains live credentials, completely bypassing earlier security gates.
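One way to narrow the plugin misconfiguration risk is to build the replacement map from an explicit allowlist instead of the whole environment. The sketch below assumes a hypothetical `PUBLIC_` naming convention and a hypothetical `buildClientDefines` helper; the resulting object has the general shape that define-style plugins accept, but the exact option format depends on your bundler.

```javascript
// Hypothetical convention: only variables starting with PUBLIC_ may reach the client.
const PUBLIC_PREFIX = 'PUBLIC_';

function buildClientDefines(env, prefix = PUBLIC_PREFIX) {
  const defines = {};
  for (const [name, value] of Object.entries(env)) {
    if (name.startsWith(prefix)) {
      // Values are JSON-encoded because define-style replacement is textual.
      defines[`process.env.${name}`] = JSON.stringify(value);
    }
  }
  return defines;
}

const defines = buildClientDefines({
  PUBLIC_API_BASE_URL: 'https://api.example.com',
  GITLAB_TOKEN: 'not-for-the-browser', // never copied into the map
});

console.log(defines);
```

Anything not matching the prefix never reaches the bundler, so a token added to the CI environment later cannot be inlined by accident.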

Real-World Impact & Case Studies

Theoretical risks are one thing; real-world breaches are another. The research data paints a clear picture of widespread, high-impact exposure.

| Secret Type | Number Found | Potential Impact | Example Service |
|---|---|---|---|
| Code Repository Tokens | 688 | Full access to private source code, CI/CD pipelines, and linked cloud infrastructure (AWS, SSH). | GitHub, GitLab |
| Project Management API Keys | Multiple | Access to internal tickets, projects, roadmaps, and linked documents. | Linear, Jira, Asana |
| Communication Webhooks | 314 | Ability to post messages to internal channels, impersonate bots, or trigger automated workflows. | Slack, Microsoft Teams, Discord |
| Email/SaaS Platform Keys | Hundreds | Control over mailing lists, campaigns, customer data, and critical business workflows. | SendGrid, Mailchimp, Stripe |

Case Study: The GitLab Token That Opened Everything

One identified exposure involved a GitLab Personal Access Token with `api` and `read_repository` scopes embedded in a JavaScript file. This single token was not just active; it granted access to an organization's entire private codebase. From there, an attacker could:

- Clone any private repository.

- Examine CI/CD configuration files (`.gitlab-ci.yml`) which often contain deployment credentials for AWS, Google Cloud, or databases.

- Trigger pipeline jobs, potentially deploying malicious code or extracting further secrets from the pipeline environment.

This demonstrates the "keys to the kingdom" nature of these leaks. A single front-end secret can unravel multiple layers of security through interconnected services.

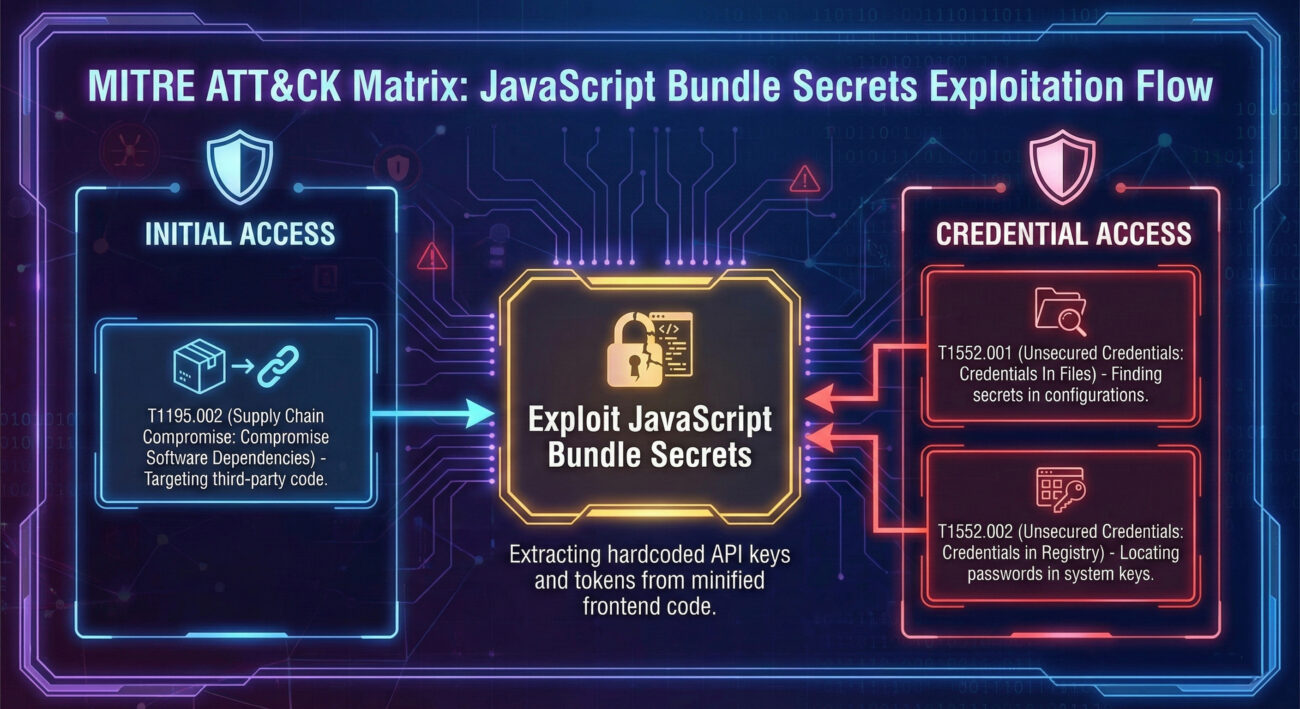

The MITRE ATT&CK Connection

This threat is not an isolated issue but fits squarely into established adversary frameworks. In MITRE ATT&CK, the exploitation of JavaScript bundle secrets maps to specific techniques, primarily under the Credential Access and Initial Access tactics.

The most relevant technique is T1552.001: Unsecured Credentials - Credentials in Files. This technique involves adversaries searching for credential material stored improperly in files. Publicly accessible JavaScript bundles are a prime target.

- Tactic: Credential Access

- Technique ID: T1552.001

- Procedure: An attacker uses automated tools or manually inspects the JavaScript files loaded by a target web application (e.g., by viewing page source or using browser DevTools) to find embedded API keys, tokens, or passwords.

- End Goal: The stolen credentials are used for Initial Access (TA0001) to external services (like cloud platforms, SaaS apps, or source code repos), enabling further intrusion.

Understanding this mapping is crucial for defenders. It allows security teams to prioritize detection rules (e.g., in a SIEM) that flag internal API keys being used from unexpected client IP addresses, and to treat these leaks as one step in a broader attack chain rather than an isolated misconfiguration.

Why Existing Detection Methods Fail

The scale of the problem (more than 42,000 exposed tokens) exists precisely because conventional security scanners are blind to this specific vector. Here’s a breakdown of the gaps.

The Scanner's Blind Spot

- Traditional Infrastructure Scanners: These tools scan a base URL and known paths. They do not execute a browser, so they never fetch or analyze the linked JavaScript bundle files (e.g., `/assets/index-abc123.js`). The secrets remain invisible.

- Dynamic Application Security Testing (DAST): While DAST tools can spider applications and execute JavaScript, they are often complex, expensive, and reserved for critical apps. They may also lack comprehensive regex patterns for the vast array of possible secret formats (334 types found in research).

- Static Application Security Testing (SAST): SAST excels at scanning source code pre-merge but is ineffective against secrets introduced during the build process from environment variables or CI/CD systems. The secret isn't in the source repo; it's added later.

The Required Solution

- Post-Build, Pre-Deployment Analysis: Scanning the actual build artifacts (the final JS bundles) before they are deployed.

- SPA-Aware Spidering: Using a headless browser to fully render the application, discover all loaded JavaScript files (including those lazy-loaded), and scan their content.

- Comprehensive Secret Pattern Library: Employing an extensive, updatable set of regex and validation checks for hundreds of service providers, not just a handful of common ones.
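The "regex plus validation" idea can be sketched as follows. The four patterns are simplified assumptions chosen for illustration, not the rule set of any particular scanner, and `scanBundle` and `looksLikeJwt` are hypothetical names. The validation step shows how a second check can cut false positives: a JWT candidate is kept only if its header decodes to JSON with an `alg` field.

```javascript
// Illustrative pattern library (simplified, assumed formats).
const SECRET_PATTERNS = [
  { type: 'aws-access-key-id', regex: /\bAKIA[0-9A-Z]{16}\b/g },
  { type: 'gitlab-pat', regex: /\bglpat-[0-9A-Za-z_-]{20,}/g },
  { type: 'stripe-live-secret-key', regex: /\bsk_live_[0-9A-Za-z]{24,}/g },
  {
    type: 'jwt',
    regex: /\beyJ[0-9A-Za-z_-]+\.[0-9A-Za-z_-]+\.[0-9A-Za-z_-]*/g,
    validate: looksLikeJwt,
  },
];

// Validation: the first JWT segment must base64url-decode to JSON with "alg".
function looksLikeJwt(candidate) {
  try {
    const headerJson = Buffer.from(candidate.split('.')[0], 'base64url').toString('utf8');
    return typeof JSON.parse(headerJson).alg === 'string';
  } catch {
    return false;
  }
}

function scanBundle(text) {
  const findings = [];
  for (const { type, regex, validate } of SECRET_PATTERNS) {
    for (const match of text.matchAll(regex)) {
      if (validate && !validate(match[0])) continue;
      // Store only a short preview so the report does not re-leak the secret.
      findings.push({ type, index: match.index, preview: match[0].slice(0, 8) + '...' });
    }
  }
  return findings;
}

// AWS's documented example key ID, embedded in a fake minified bundle.
const sampleBundle = 'var k="AKIAIOSFODNN7EXAMPLE";';
console.log(scanBundle(sampleBundle));
```

A production library would carry hundreds of provider-specific rules and, where safe, confirm candidates against the provider.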

Step-by-Step: Finding Your Own Bundle Secrets

As a defender, you need to proactively hunt for these exposures. Here is a manual, beginner-friendly audit process you can start today.

Step 1: Map Your Public-Facing Applications

List all your organization's customer-facing web applications, especially single-page applications (SPAs) built with React, Angular, Vue, etc. Don't forget staging and development environments that might be publicly accessible.

Step 2: Capture the JavaScript Bundle Files

For each application, open it in a browser (Chrome/Firefox). Use the Developer Tools (F12):

- Go to the Network tab.

- Refresh the page (Ctrl+F5).

- Filter by JS file types.

- You will see files like `app.[hash].js`, `vendor.[hash].js`, `chunk-[hash].js`. These are your bundles.

- Right-click each JS file and select "Save as..." to download them locally for inspection.
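The manual capture step can be partly automated. The sketch below extracts script URLs from a page's HTML with a hypothetical `extractScriptUrls` helper; `https://app.example.com/` is a placeholder. It is a simplification: a regex over static HTML will not see lazy-loaded chunks, which is why a headless browser is still needed for full coverage.

```javascript
// Collect absolute URLs of externally loaded scripts from an HTML document.
function extractScriptUrls(html, pageUrl) {
  const urls = new Set();
  // Matches <script ... src="..."> tags; inline scripts have no src and are skipped.
  const scriptTag = /<script\b[^>]*\bsrc\s*=\s*["']([^"']+)["'][^>]*>/gi;
  for (const match of html.matchAll(scriptTag)) {
    // Resolve relative paths such as /assets/app.js against the page URL.
    urls.add(new URL(match[1], pageUrl).href);
  }
  return [...urls];
}

const exampleHtml =
  '<script type="module" src="/assets/index-abc123.js"></script>' +
  '<script src="https://cdn.example.com/vendor.js"></script>';

console.log(extractScriptUrls(exampleHtml, 'https://app.example.com/'));
```

Each returned URL can then be downloaded and searched for secret patterns.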

Step 3: Search for Secret Patterns

Open the downloaded files in a powerful text editor (like VS Code) or use command-line tools. Search for common patterns:

```bash
# Using grep on the command line (adapt patterns)
grep -r -i "api[_-]*key" ./downloaded_js_files/
grep -r "sk_live_" ./downloaded_js_files/           # Stripe Live Secret Key
grep -r "eyJhbGciOi" ./downloaded_js_files/         # Base64-encoded JWT tokens
grep -r "password.*=.*['\"]" ./downloaded_js_files/ # Assignment patterns
```

Look for strings that resemble: API keys (32+ hex chars), OAuth tokens, Bearer tokens, database connection strings (`postgres://user:pass@host...`), and service-specific keys (e.g., `AKIA...` for AWS).

Step 4: Validate Findings (CRITICAL)

⚠️ Do NOT test live keys against real services from your IP address. It creates logs and alerts. Instead:

- Use a dedicated, isolated sandbox environment if your company has one.

- For Git tokens, you can carefully use the provider's API with the token from a controlled, non-production environment to check scopes (e.g., `curl --header "Authorization: Bearer TOKEN" https://api.github.com/user`).

- The goal is to confirm if the token is active and understand its permissions.
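The scope check from the `curl` example could be scripted roughly as follows. This sketch assumes a classic GitHub token, for which the API reports granted scopes in the `X-OAuth-Scopes` response header; other token types and providers expose permissions differently. `parseScopes` and `checkGithubToken` are hypothetical helper names, and the call should only be run from the controlled environment described above.

```javascript
// Turn a header value such as "repo, read:org" into ['repo', 'read:org'].
function parseScopes(headerValue) {
  if (!headerValue) return [];
  return headerValue
    .split(',')
    .map((scope) => scope.trim())
    .filter(Boolean);
}

// `fetchImpl` is injectable so the function can be exercised without network access.
async function checkGithubToken(token, fetchImpl = fetch) {
  const response = await fetchImpl('https://api.github.com/user', {
    headers: { Authorization: `Bearer ${token}` },
  });
  return {
    active: response.status === 200,
    scopes: parseScopes(response.headers.get('x-oauth-scopes')),
  };
}

console.log(parseScopes('repo, read:org'));
```

An active token with broad scopes should move straight to the rotation step.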

Step 5: Triage and Remediate Immediately

For any valid, active secret found:

- Rotate/Revoke Immediately: Go to the service (GitHub, AWS, SendGrid, etc.) and revoke the exposed key. Generate a new one.

- Fix the Leak Source: Trace how the secret got into the bundle. Was it a hardcoded value? A misconfigured build plugin? Fix the root cause in the code and CI/CD pipeline.

- Monitor for Unauthorized Use: Check logs of the service associated with the exposed key for any suspicious activity that occurred before rotation.
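For the log review, a small filter can surface uses of the exposed key that came from unknown addresses before rotation. The log entry shape (`keyId`, `timestamp`, `sourceIp`) and the `findSuspiciousUse` helper are assumptions for illustration; real audit logs differ by provider.

```javascript
// Return log entries where the exposed key was used before rotation
// from an IP address that is not on the known-good list.
function findSuspiciousUse(logEntries, { keyId, rotatedAt, knownIps }) {
  const cutoff = new Date(rotatedAt).getTime();
  const known = new Set(knownIps);
  return logEntries.filter(
    (entry) =>
      entry.keyId === keyId &&
      new Date(entry.timestamp).getTime() < cutoff &&
      !known.has(entry.sourceIp)
  );
}

// Hypothetical audit log using documentation-reserved IP ranges.
const auditLog = [
  { keyId: 'key-1', timestamp: '2025-01-10T08:00:00Z', sourceIp: '203.0.113.10' },
  { keyId: 'key-1', timestamp: '2025-01-10T09:00:00Z', sourceIp: '198.51.100.7' },
  { keyId: 'key-2', timestamp: '2025-01-10T09:30:00Z', sourceIp: '198.51.100.7' },
];

const suspicious = findSuspiciousUse(auditLog, {
  keyId: 'key-1',
  rotatedAt: '2025-01-11T00:00:00Z',
  knownIps: ['203.0.113.10'],
});

console.log(suspicious);
```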

Common Mistakes & Best Practices

🚫 Common Mistakes That Lead to Exposure

- Using Client-Side Environment Variables Incorrectly: Prefixing variables with `REACT_APP_` (Create React App) or `VITE_` (Vite) and assuming they are safe for any secret. These are embedded at build time and are public.

- Hardcoded Fallback Values: Using syntax like `const key = process.env.KEY || 'hardcoded-key';` as shown earlier.

- Committing Configuration Files: Accidentally committing `.env` files or `config.json` files with secrets to version control, which later get pulled into the build.

- Over-Permissioned Tokens: Using a token with full `repo` or `admin` scope in a front-end context where only a limited scope is needed (if any).

- Ignoring Dependencies: Not auditing third-party npm packages that could be malicious or vulnerable and might access environment variables during bundling.

✅ Best Practices for Secure Front-End Development

- Zero Secrets in Front-End Code: Adopt the absolute rule that no secret, token, or credential can ever be shipped to the client browser. Front-end code must call a backend API (your own server) to perform any authenticated action.

- Use Backend Proxies: If your front-end needs to call a third-party API (e.g., Google Maps, a payment service), create a thin proxy endpoint on your backend. The front-end calls your proxy, and your proxy (which securely holds the API key) makes the call to the third party.

- Secure Build-Time Variables: Only use build-time environment variables for non-secret configuration like public API base URLs, feature flags, or analytics IDs. Treat them as public.

- Implement Pre-Commit & Pre-Build Hooks: Use tools like git-secrets or Gitleaks to prevent secrets from entering the repo. Use TruffleHog in CI to scan for secrets in the codebase and build artifacts.

- Automate Post-Build Scanning: Integrate a dedicated JavaScript bundle secrets scanner into your CI/CD pipeline to analyze the final build output before it's deployed.
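A post-build gate can be as small as a script that walks the output directory and fails the job on any match. The sketch below is an assumption-laden stand-in for a dedicated scanner: the three patterns are illustrative, `dist` is a hypothetical output directory, and `scanBuildOutput` is a hypothetical helper.

```javascript
const fs = require('fs');
const path = require('path');

// Illustrative patterns only; a real gate would use a maintained rule set.
const PATTERNS = [
  /AKIA[0-9A-Z]{16}/,
  /glpat-[0-9A-Za-z_-]{20,}/,
  /sk_live_[0-9A-Za-z]{24,}/,
];

// Recursively list every .js file under the build output directory.
function listJsFiles(dir) {
  return fs.readdirSync(dir, { withFileTypes: true }).flatMap((entry) => {
    const fullPath = path.join(dir, entry.name);
    if (entry.isDirectory()) return listJsFiles(fullPath);
    return entry.name.endsWith('.js') ? [fullPath] : [];
  });
}

function scanBuildOutput(dir) {
  const findings = [];
  for (const file of listJsFiles(dir)) {
    const text = fs.readFileSync(file, 'utf8');
    for (const pattern of PATTERNS) {
      if (pattern.test(text)) findings.push({ file, pattern: pattern.source });
    }
  }
  return findings;
}

// Usage in CI (after the build step, before deploy):
// const findings = scanBuildOutput('dist');
// if (findings.length > 0) { console.error(findings); process.exit(1); }
```

Because it runs against the built artifacts, this catches secrets injected from CI variables that a source-only scan would never see.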

Implementation Framework: A 5-Pillar Defense Strategy

To systematically eliminate this risk, move beyond one-off fixes and build a resilient defense-in-depth strategy.

| Pillar | Objective | Tools & Actions | Responsible Team |
|---|---|---|---|
| 1. Prevention (Shift-Left) | Stop secrets from entering the codebase and build process. | Pre-commit hooks (Gitleaks), IDE plugins, developer training, secure CI/CD variable management (e.g., GitHub Secrets, GitLab CI Variables). | Development, Platform/SRE |
| 2. Detection (In-Pipeline) | Scan build artifacts for secrets before deployment. | Integrate specialized JavaScript bundle scanners into CI/CD. Use tools like Intruder's SPA scan, custom scripts with TruffleHog, or commercial secrets management solutions. | Security, DevOps |
| 3. Runtime Protection | Monitor for and respond to leaked secrets being used. | Configure alerts in SaaS platforms for token usage from unexpected locations/IPs. Use a secure secrets manager (HashiCorp Vault, AWS Secrets Manager) that provides audit logs. | Security, Cloud Ops |
| 4. Architecture & Design | Design systems where front-ends don't need secrets. | Implement the Backend-for-Frontend (BFF) pattern. Use short-lived, scope-limited tokens (JWTs) issued by your backend for user sessions, not service-level keys. | Architecture, Development |
| 5. Education & Culture | Make security a shared responsibility. | Regular training on secure coding for SPAs. Create clear, accessible documentation on "how to call APIs securely from the front-end." Foster blameless post-mortems for incidents to learn and improve. | Security, Engineering Leadership |
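Pillar 4's short-lived, scope-limited tokens can be illustrated with a small HMAC-signed token issued and verified by the backend. This is a simplified sketch, not a JWT implementation: the token format and the `issueSessionToken` and `verifySessionToken` helpers are hypothetical, and a production system would more likely use a vetted JWT library. The signing secret stays on the server; only the expiring token reaches the browser.

```javascript
const nodeCrypto = require('crypto');

function sign(data, secret) {
  return nodeCrypto.createHmac('sha256', secret).update(data).digest('base64url');
}

// Issue a token that carries its claims plus an expiry (seconds since epoch).
function issueSessionToken(claims, secret, ttlSeconds, nowMs = Date.now()) {
  const payload = { ...claims, exp: Math.floor(nowMs / 1000) + ttlSeconds };
  const body = Buffer.from(JSON.stringify(payload)).toString('base64url');
  return `${body}.${sign(body, secret)}`;
}

// Return the payload if the signature is valid and the token has not expired.
function verifySessionToken(token, secret, nowMs = Date.now()) {
  const [body, signature] = token.split('.');
  if (!body || !signature) return null;
  const expected = Buffer.from(sign(body, secret));
  const actual = Buffer.from(signature);
  // Constant-time comparison; lengths must match before timingSafeEqual.
  if (expected.length !== actual.length || !nodeCrypto.timingSafeEqual(expected, actual)) {
    return null;
  }
  const payload = JSON.parse(Buffer.from(body, 'base64url').toString('utf8'));
  return payload.exp > Math.floor(nowMs / 1000) ? payload : null;
}

// Example: a 5-minute token limited to reading reports.
const sessionToken = issueSessionToken({ sub: 'user-42', scope: 'reports:read' }, 'server-only-secret', 300);
console.log(verifySessionToken(sessionToken, 'server-only-secret'));
```

If such a token does leak, the damage is bounded by its scope and its expiry, unlike a long-lived service key.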

Frequently Asked Questions (FAQ)

Q: Aren't environment variables in my .env file safe? They aren't committed to git.

A: Not once they reach a front-end build. Build tools like Create React App, Vite, and Next.js embed the values of client-exposed environment variables (those prefixed with `REACT_APP_`, `VITE_`, or `NEXT_PUBLIC_`, e.g., `REACT_APP_API_KEY`) directly into the final JavaScript bundle at build time. The resulting `.js` file is served publicly. Keeping your `.env` file out of git is irrelevant once its values are baked into a public file.

Q: I need my front-end to call an external API (like Stripe or a mapping service). How do I do it without an API key?

A: Use a Backend Proxy. Your front-end code should only call your own backend server. Your backend, which can securely store the real API key (using a secrets manager), then makes the request to the external service and relays the response back to the front-end. This keeps the key off the client side entirely.
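A thin proxy along these lines might look like the sketch below, using only Node's built-in `http` module. The upstream URL `https://maps.example.com/v1/geocode`, the `MAPS_API_KEY` variable name, and the helper names are all hypothetical; the point is that the key is attached on the server and only allowlisted parameters from the browser are forwarded.

```javascript
const http = require('http');

// Hypothetical third-party endpoint.
const UPSTREAM_BASE = 'https://maps.example.com/v1/geocode';

// Build the upstream request; only the `q` parameter is forwarded.
function buildUpstreamRequest(clientUrl, apiKey) {
  const incoming = new URL(clientUrl, 'http://localhost');
  const upstream = new URL(UPSTREAM_BASE);
  upstream.searchParams.set('q', incoming.searchParams.get('q') || '');
  return { url: upstream.href, headers: { Authorization: `Bearer ${apiKey}` } };
}

function createProxyServer(apiKey, fetchImpl = fetch) {
  return http.createServer(async (req, res) => {
    try {
      const { url, headers } = buildUpstreamRequest(req.url, apiKey);
      const upstreamResponse = await fetchImpl(url, { headers });
      res.writeHead(upstreamResponse.status, { 'Content-Type': 'application/json' });
      res.end(await upstreamResponse.text());
    } catch {
      // Do not echo upstream errors, which could include request details.
      res.writeHead(502);
      res.end();
    }
  });
}

// Usage (the key is read from the server's environment, never bundled):
// createProxyServer(process.env.MAPS_API_KEY).listen(3000);
```

A real proxy would also authenticate the caller and rate-limit requests, otherwise it simply becomes an open relay for the paid API.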

Q: Can minification or obfuscation protect my secrets?

A: Absolutely not. Minification reduces code size, and obfuscation only makes code harder to read. Neither encrypts string values: the browser must be able to recover them, so an attacker can too. An attacker can search the minified code for known patterns or simply prettify it to make strings plainly visible.
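A quick demonstration of why minification does not help: variable names are shortened, but string literals survive intact. The `extractStringLiterals` helper and the fake token below are hypothetical, and the regex is a rough approximation of JavaScript string syntax rather than a real parser.

```javascript
// Pull quoted and template string contents out of (minified) JavaScript text.
function extractStringLiterals(code) {
  // A quote character, then escaped chars or anything except that quote, then the same quote.
  const literal = /(["'`])((?:\\.|(?!\1)[^\\])*)\1/g;
  return [...code.matchAll(literal)].map((match) => match[2]);
}

// What a minifier might emit: short identifiers, but the token is still readable.
const minified =
  'const a="glpat-EXAMPLEONLY0000000000",b=e=>fetch("/x",{headers:{Authorization:`Bearer ${a}`}});';

console.log(extractStringLiterals(minified));
```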

Q: How is this different from a general "hardcoded secret" problem?

A: The key difference is the delivery vector and detection gap. A hardcoded secret in backend source code might be found by SAST. A secret baked into a JavaScript bundle is delivered to every user's browser, making it massively exposed. It also evades traditional infrastructure scanners that don't execute JS, making it a uniquely stealthy and dangerous form of leakage.

Key Takeaways & Call to Action

JavaScript bundle secrets represent a critical and widespread security gap. They bypass traditional "shift-left" controls and evade conventional scanning tools, creating a direct pipeline for attackers to gain initial access to your most sensitive systems.

Your Immediate Action Plan:

- Audit Now: Follow the step-by-step guide above to manually check your most critical public web applications for exposed secrets.

- Fix the Architecture: Mandate that all front-end code must call a backend service for any operation requiring authentication. Eliminate the concept of front-end-held API keys.

- Integrate Specialized Scanning: Augment your CI/CD pipeline with a scanning step designed specifically to analyze built JavaScript bundles. Don't rely solely on SAST or DAST.

- Educate Your Team: Share this article with your development and DevOps teams. Make "no client-side secrets" a non-negotiable principle.

The data is clear: with over 42,000 tokens found, this is not a hypothetical threat. It is an active, ongoing exposure affecting real organizations. By understanding the mechanics (build-time inclusion, the MITRE ATT&CK mapping to T1552.001, and the scanner blind spots), you can move from being a potential victim to a proactive defender.

Start your hunt today. Your secrets are waiting to be found; make sure you find them before the adversary does.

© 2026 Cyber Pulse Academy. This content is provided for educational purposes only.

Always consult with security professionals for organization-specific guidance.