The Hidden Toll of Cloud Downtime

The Shocking Truth About Cloud Resilience Explained Simply

What You'll Learn

- The Shattered Myth of Cloud Infallibility

- The Hidden Costs & Cascading Chaos of Downtime

- The Adversary's Playbook: MITRE ATT&CK Tactics in Downtime

- Your 5-Step Resilience & Recovery Blueprint

- Red Team vs. Blue Team: Downtime Perspectives

- Implementation Framework: From Strategy to Action

- Frequently Asked Questions

- Key Takeaways & Next Steps

Executive Summary: The Wake-Up Call

The promise of the cloud was unbreakable uptime. Data from 2024-2025 tells a different story: popular DevOps SaaS platforms such as GitHub, Jira, and Azure DevOps saw a 69% year-over-year increase in critical incidents, adding up to more than 9,255 hours of degraded performance or outright downtime in 2025 alone.

This isn't just a technical hiccup; it's a critical business risk. For mid-to-large enterprises, a single hour of downtime can cost between $300,000 and over $5 million. Beyond the immediate financial bleed, outages cause engineering paralysis, security compromises through shadow IT, and lasting reputational damage.

This guide moves beyond fear to a practical, actionable framework. We'll dissect the real costs, explore how threat actors can exploit DevOps SaaS downtime (mapping to MITRE ATT&CK), and provide a step-by-step blueprint for building a resilient operation that can withstand the inevitable cloud storm.

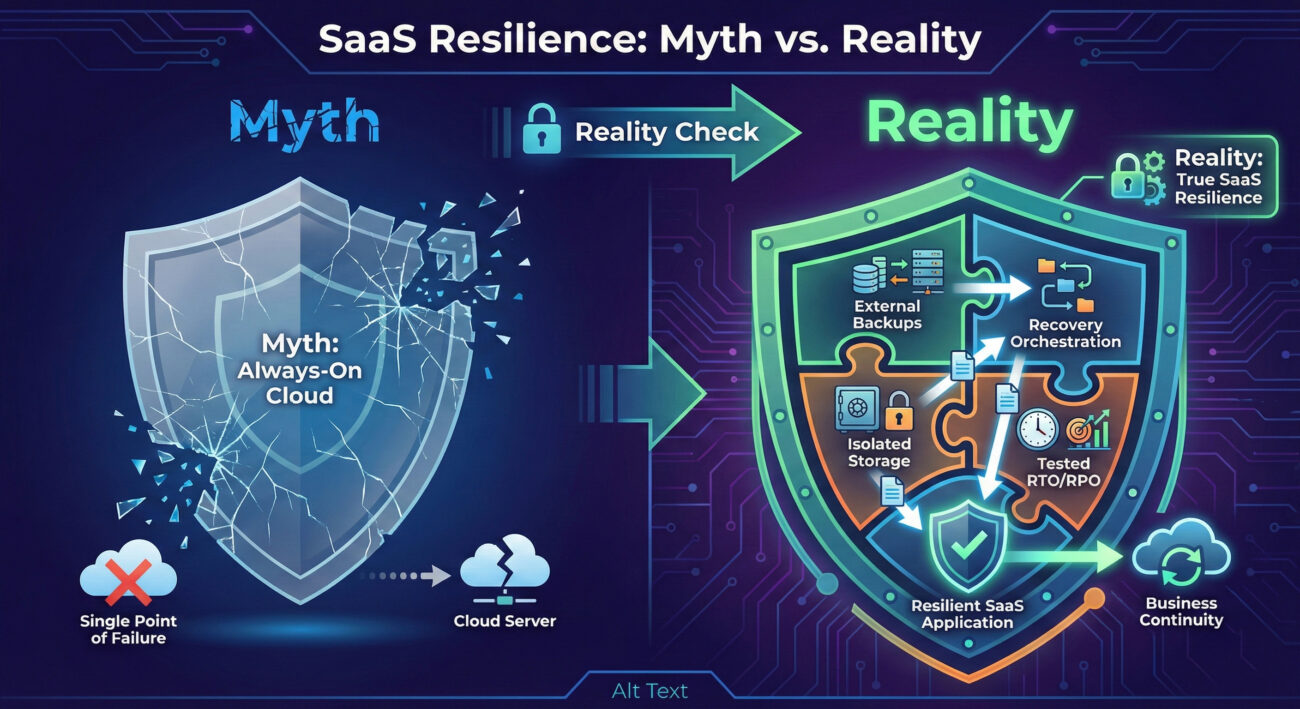

The Shattered Myth of Cloud Infallibility

The Shared Responsibility Model is often misunderstood. While your SaaS provider manages the infrastructure, you remain responsible for your data: source code, pipelines, and project metadata. Providers' standard terms generally do not oblige them to restore your data after an incident, accidental deletion, or ransomware attack.

Relying solely on a provider's native backup tools creates a dangerous single point of failure. If the provider's cloud region goes down, your production and your backups within that same ecosystem are likely unreachable. Furthermore, these native tools often lack granular restore capabilities, forcing you to recover an entire repository to fix one deleted branch.

The Hidden Costs & Cascading Chaos of DevOps SaaS Downtime

More Than Just Lost Revenue

The financial toll is the most cited, but it's just the tip of the iceberg. Let's break down the true impact of a major DevOps SaaS downtime event:

| Impact Category | Direct Consequences | Long-Term Business Risk |
|---|---|---|
| Financial Liquidity | Immediate loss of revenue, SLA penalties, overtime labor for firefighting. | Eroded profit margins, reduced investment capacity, potential shareholder value loss. |
| Operational Paralysis | SCM freeze, CI/CD pipeline halt, inaccessible project management (Jira), loss of artifact repositories. | Missed product deadlines, delayed time-to-market, project failure, team morale crash. |
| Security & Shadow IT | Developers sharing code via unsecured channels (Slack, email), using personal GitHub accounts, bypassing controls. | Data leaks, intellectual property theft, introduction of malware, compromised credentials creating future attack vectors. |
| Reputation & Trust | Failed customer deployments, broken SLAs, public status page incidents. | Loss of customer confidence, negative PR, difficulty acquiring new business, partner attrition. |
| Compliance & Audit | Inability to demonstrate data recovery capabilities (violating ISO 27001 A.8.13, SOC 2, NIS2 Directive). | Failed audits, loss of certifications, legal fines, exclusion from regulated industry bids. |

The Adversary's Playbook: MITRE ATT&CK Tactics in Downtime

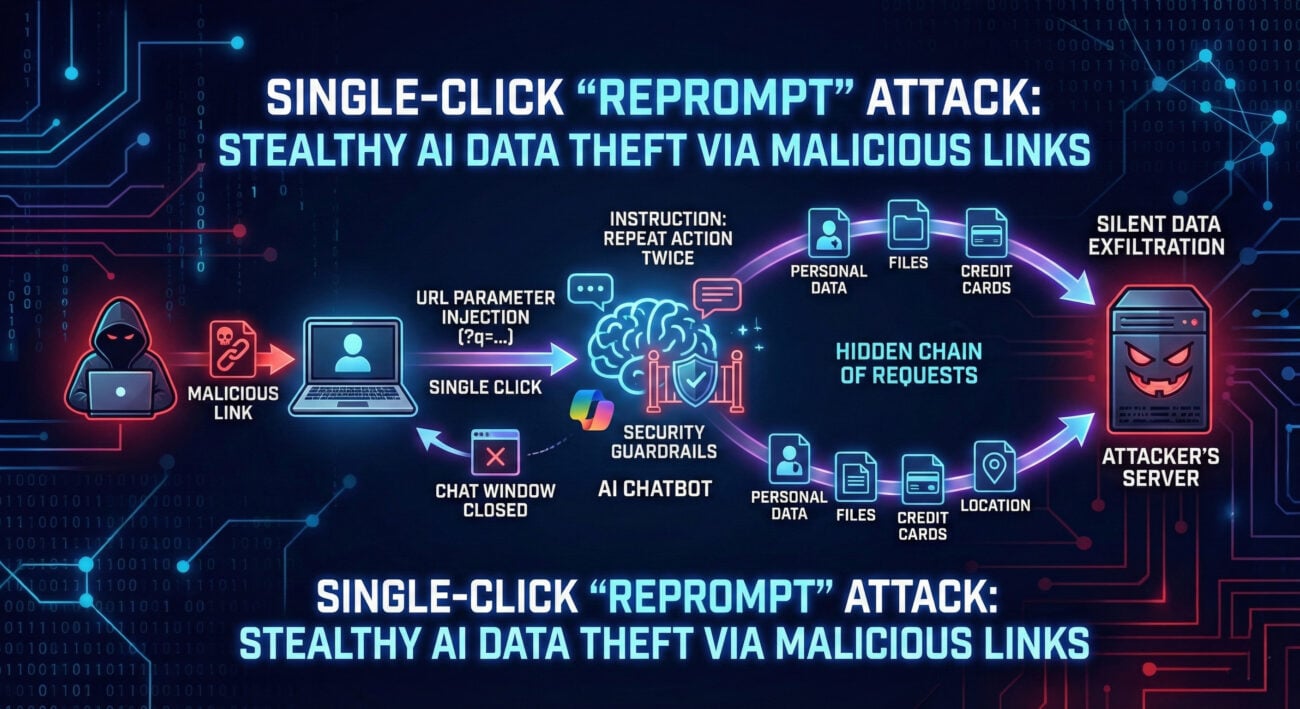

Sophisticated threat actors don't just cause downtime; they exploit it. A major DevOps SaaS outage creates a perfect storm of distraction and operational desperation that adversaries leverage. Here’s how their playbook aligns with the MITRE ATT&CK framework:

Tactic: Initial Access (TA0001)

During an outage, employees scramble. A well-timed, credible-looking phishing email posing as "GitHub Support" or "Atlassian Incident Response" can trick even savvy engineers into clicking a malicious link or revealing credentials, providing the initial foothold into your corporate network.

Tactic: Impact (TA0040), including Resource Hijacking (T1496)

Adversaries may launch DDoS attacks (T1498) against your remaining infrastructure to maximize chaos. More insidiously, they might exploit the chaos to deploy cryptocurrency miners (T1496) on your compromised systems, assuming the downtime will mask the abnormal CPU usage.

Tactic: Lateral Movement & Exfiltration (TA0008, TA0010)

With security teams focused on restoring services, monitoring is often degraded. Attackers can move laterally from an initial foothold (e.g., a developer's machine) to more critical systems like CI/CD servers or artifact stores, where they can collect source code (T1213) or harvest unsecured credentials (T1552) before exfiltrating them.

Your 5-Step Resilience & Recovery Blueprint

Building resilience is a proactive discipline, not a reactive panic. Follow this actionable blueprint.

Step 1: Audit & Accept Shared Responsibility

Map every DevOps SaaS tool in your stack (GitHub, GitLab, Jira, Confluence, Jenkins, etc.). For each, explicitly document the provider's responsibilities (infrastructure) and your own (data, configuration, access management). This clarifies your true security boundary.

Step 2: Implement the 3-2-1-1-0 Backup Rule

Move beyond basic backups. For critical DevOps data:

- 3 total copies of your data.

- 2 different media types (e.g., cloud object storage + on-prem NAS).

- 1 copy stored offsite.

- 1 copy immutable or air-gapped (cannot be altered or deleted for a set period).

- 0 errors in automated backup verification.

Use tools like GitProtect.io or Rewind for SaaS-specific backup that allows granular, cross-platform restore.
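The rule above can be turned into an automated check. The following is a minimal sketch, assuming you keep an inventory of backup copies with the attributes shown; the `BackupCopy` fields and the sample entries are hypothetical and not tied to any particular backup product. It also assumes the production copy counts toward the three total copies.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BackupCopy:
    """One copy of a dataset. All field names are illustrative assumptions."""
    name: str
    media_type: str      # e.g. "saas", "cloud-object-storage", "on-prem-nas"
    offsite: bool        # stored away from the primary location
    immutable: bool      # locked or air-gapped for a retention period
    verify_errors: int   # errors reported by the last verification run


def check_3_2_1_1_0(copies: list[BackupCopy]) -> dict[str, bool]:
    """Return a pass/fail flag for each part of the 3-2-1-1-0 rule."""
    return {
        "3_copies": len(copies) >= 3,
        "2_media_types": len({c.media_type for c in copies}) >= 2,
        "1_offsite": any(c.offsite for c in copies),
        "1_immutable": any(c.immutable for c in copies),
        "0_verify_errors": all(c.verify_errors == 0 for c in copies),
    }


# Hypothetical inventory: production data plus two backups.
copies = [
    BackupCopy("github-production", "saas", offsite=False, immutable=False, verify_errors=0),
    BackupCopy("object-storage-backup", "cloud-object-storage", offsite=True, immutable=True, verify_errors=0),
    BackupCopy("nas-backup", "on-prem-nas", offsite=False, immutable=False, verify_errors=0),
]
result = check_3_2_1_1_0(copies)
```

Any flag that comes back `False` points at the part of the rule that still needs work.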

Step 3: Define and Test RTO & RPO

Recovery Time Objective (RTO): How fast must you be back online? (e.g., "Our GitHub organization must be restorable within 4 hours").

Recovery Point Objective (RPO): How much data loss is acceptable? (e.g., "We can tolerate up to 1 hour of lost Jira issue updates").

Action: Quarterly, perform a recovery drill. Restore a sample project from your external backup to a sandbox environment. Time it. This turns a theoretical plan into a proven capability.
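To make the drill measurable, record three timestamps and compare them with your targets. This is a small sketch under stated assumptions: the function name, its parameters, and the sample timestamps are made up for illustration, and the 4-hour RTO and 1-hour RPO are the example targets from this step.

```python
from datetime import datetime, timedelta


def evaluate_drill(
    outage_start: datetime,
    last_backup_at: datetime,
    restore_finished: datetime,
    rto: timedelta,
    rpo: timedelta,
) -> dict[str, object]:
    """Compare one recovery drill against the RTO and RPO targets."""
    recovery_time = restore_finished - outage_start    # time until service is back
    data_loss_window = outage_start - last_backup_at   # changes missing from the backup
    return {
        "recovery_time": recovery_time,
        "data_loss_window": data_loss_window,
        "rto_met": recovery_time <= rto,
        "rpo_met": data_loss_window <= rpo,
    }


# Hypothetical drill: simulated outage at 09:00, last backup at 08:15,
# sandbox restore finished at 12:30.
drill = evaluate_drill(
    outage_start=datetime(2025, 3, 1, 9, 0),
    last_backup_at=datetime(2025, 3, 1, 8, 15),
    restore_finished=datetime(2025, 3, 1, 12, 30),
    rto=timedelta(hours=4),
    rpo=timedelta(hours=1),
)
```

Logging these results each quarter shows whether recovery is getting faster or slower over time.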

Step 4: Architect for Failover & Isolation

For mission-critical pipelines, have a warm standby option. This could be a secondary, less-used SaaS provider (e.g., GitLab as a backup for GitHub) or even an on-premise instance like Bitbucket Data Center. Ensure your backups are stored in a separate cloud account/region from your primary production SaaS tools.
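The isolation requirement is easy to check mechanically once you write down where each system lives. The sketch below is illustrative only: the `Location` type, the provider names, and the account identifiers are assumptions, not real resources.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Location:
    """Where a system or backup lives. Values are illustrative."""
    provider: str   # e.g. "aws", "azure", "on-prem"
    account: str
    region: str


def isolation_problems(primary: Location, backup: Location) -> list[str]:
    """List the ways a backup location shares fate with the primary."""
    problems = []
    same_provider = primary.provider == backup.provider
    if same_provider and primary.account == backup.account:
        problems.append("same account")
    if same_provider and primary.region == backup.region:
        problems.append("same region")
    return problems


# Hypothetical example: separate account, but still the same region.
primary = Location("aws", "prod-account", "us-east-1")
backup = Location("aws", "backup-account", "us-east-1")
problems = isolation_problems(primary, backup)
```

An empty list means the backup does not share an account or region with the primary.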

Step 5: Fortify Human Security Posture

Train your teams on downtime protocols. Establish clear, approved communication channels (e.g., a company-hosted Mattermost instance) and explicitly forbid shadow IT during incidents. Integrate drills into your security awareness training.

Common Mistakes & Best Practices

🚫 Common Mistakes

- Blind Trust in SLAs: Relying on a provider's 99.9% uptime SLA as a guarantee, forgetting it only offers financial credits, not operational continuity.

- Backup Sprawl Without Verification: Having multiple backup jobs but never testing if a restore actually works.

- Neglecting Metadata: Only backing up source code, but not the associated pull requests, comments, pipeline configurations, or Jira issue links.

- Single Cloud Loyalty: Storing primary data, backups, and disaster recovery solutions all within the same cloud provider (e.g., everything on AWS).
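One way to guard against the "backup sprawl without verification" mistake is to compare every restored file against a checksum recorded at backup time. The following is a minimal sketch, assuming you store a manifest that maps relative file paths to SHA-256 digests; the manifest format and function names are assumptions, not a feature of any specific backup tool.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Hash a file in 1 MiB chunks so large backups do not fill memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(1024 * 1024), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_restore(restore_dir: Path, manifest: dict[str, str]) -> list[str]:
    """Return the relative paths that are missing or whose hash differs."""
    failures = []
    for rel_path, expected in manifest.items():
        restored = restore_dir / rel_path
        if not restored.is_file() or sha256_of(restored) != expected:
            failures.append(rel_path)
    return failures
```

An empty result means every file in the manifest was restored byte for byte. Anything else is a verification error to investigate before you rely on that backup.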

✅ Best Practices

- Embrace Infrastructure as Code (IaC): Store all environment configurations in Git. A complete rebuild becomes a `terraform apply` or `ansible-playbook` command away.

- Automate Recovery Orchestration: Use runbook automation tools or custom scripts to automate failover steps, reducing human error during a crisis.

- Implement Just-In-Time (JIT) Access: Reduce the attack surface by ensuring developers don't have standing admin access to SaaS platforms; elevate privileges only when needed.

- Leverage Standards: Align your program with the NIST Cybersecurity Framework (CSF), specifically the "Recover" function.

Red Team vs. Blue Team: Downtime Perspectives

🔴 Red Team (Threat Actor) View

Objective: Exploit the chaos of a DevOps SaaS downtime event to gain access, establish persistence, or exfiltrate data.

- Reconnaissance: Monitor status pages (status.github.com) and social media to identify target companies experiencing outages.

- Weaponization: Craft credible phishing lures related to the ongoing incident (e.g., "Click here for Jira restoration instructions").

- Exploitation: Target employees using personal/unsanctioned tools to share work, potentially deploying malware through fake "productivity" plugins or clones.

- Impact Amplification: Launch a secondary, low-volume DDoS attack against the company's VPN or remaining services to deepen confusion and extend the recovery window.

🔵 Blue Team (Defender) View

Objective: Maintain security posture and operational integrity during an outage, enabling safe and swift recovery.

- Enhanced Monitoring: Ramp up SIEM alerts for unusual login attempts, data egress, or new credential creation during the incident window.

- Communication Lockdown: Activate pre-defined incident communication channels; send alerts reminding teams of secure work protocols and forbidding shadow IT.

- Controlled Restoration: Execute the recovery playbook from immutable backups. Verify the integrity of restored systems before bringing them online to prevent restoring compromised data.

- Post-Incident Hunt: After recovery, conduct a threat hunting exercise focused on the outage timeline to identify any latent adversary activities.
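The enhanced monitoring and post-incident hunt steps above both come down to filtering events by the outage timeline. As a rough sketch, assuming login events have been exported from your SIEM into simple records (the `LoginEvent` shape, the user names, and the documentation-range IP addresses are all hypothetical):

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class LoginEvent:
    """A login record exported from a SIEM. The shape is an assumption."""
    user: str
    source_ip: str
    timestamp: datetime


def suspicious_logins(
    events: list[LoginEvent],
    window_start: datetime,
    window_end: datetime,
    known_ips: dict[str, set[str]],
) -> list[LoginEvent]:
    """Return logins inside the incident window from IPs not seen for that user."""
    return [
        e for e in events
        if window_start <= e.timestamp <= window_end
        and e.source_ip not in known_ips.get(e.user, set())
    ]


events = [
    LoginEvent("dev1", "198.51.100.7", datetime(2025, 3, 1, 10, 5)),   # known IP
    LoginEvent("dev1", "203.0.113.50", datetime(2025, 3, 1, 10, 20)),  # new IP, in window
    LoginEvent("dev2", "203.0.113.51", datetime(2025, 3, 1, 7, 0)),    # outside window
]
known = {"dev1": {"198.51.100.7"}}
flagged = suspicious_logins(
    events, datetime(2025, 3, 1, 9, 0), datetime(2025, 3, 1, 13, 0), known
)
```

In this sample only the second event is flagged. A real hunt would add more signals, such as new credential creation and unusual data egress.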

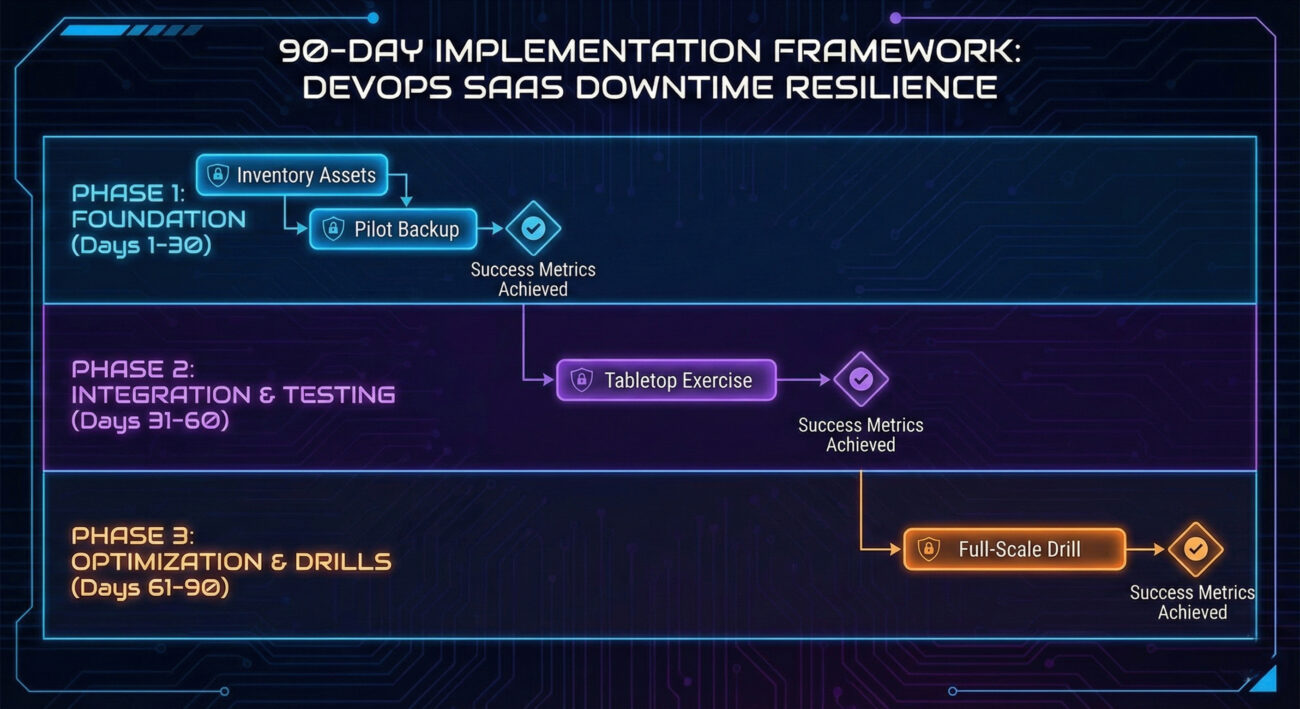

Implementation Framework: From Strategy to Action

Turn theory into a project plan. Use this 90-day framework to build momentum.

| Phase (Duration) | Key Activities | Success Metrics |
|---|---|---|
| Phase 1: Assess (Days 1-30) | Inventory SaaS assets; review contracts for SLAs & shared responsibility clauses; perform a current-state backup audit. | Completed asset registry; documented RTO/RPO requirements for top 5 critical services. |
| Phase 2: Design & Pilot (Days 31-60) | Select and configure a 3rd-party backup solution for one platform (e.g., GitHub); execute first backup and test a granular restore. | Successful restoration of a test repository/issues; measured restore time vs. target RTO. |
| Phase 3: Scale & Integrate (Days 61-90) | Extend backup to all critical platforms; automate verification; integrate recovery steps into incident response playbooks; conduct first tabletop exercise. | 100% coverage of critical assets; automated weekly backup verification report; completed tabletop exercise with lessons learned documented. |

Frequently Asked Questions

Q: Isn't our SaaS provider's native backup sufficient?

A: No, it's a baseline. Native backups often lack granular restore, live in the same infrastructure as production (creating a single point of failure), and may have contractual limitations on what scenarios they cover. They are part of the provider's resilience, not yours.

Q: How do we justify the investment in a 3rd-party backup solution?

A: Use the cost of downtime. If a 4-hour GitHub outage costs your company $1.2M, and an annual enterprise backup license is $20k, the ROI is clear. Frame it as business continuity insurance.
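The arithmetic behind that answer can be made explicit. The sketch below uses only the illustrative figures from the answer above ($1.2M for a 4-hour outage, $20k per year for the license); the break-even framing is an added assumption, namely that the backup solution shortens an outage rather than preventing it.

```python
def break_even_minutes(outage_cost: float, outage_hours: float, annual_license_cost: float) -> float:
    """Minutes of avoided downtime per year needed to pay for the license."""
    cost_per_hour = outage_cost / outage_hours
    return annual_license_cost * 60 / cost_per_hour


minutes = break_even_minutes(1_200_000, 4, 20_000)  # 4.0
loss_to_license_ratio = 1_200_000 / 20_000          # 60.0
```

With these example numbers, the license pays for itself if it shortens downtime by just 4 minutes a year, and a single 4-hour outage costs 60 times the annual license fee.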

Q: Can a major cloud provider really go down for that long?

A: Absolutely. Beyond malicious attacks, complex systems have complex failures. A 2025 report cited more than 9,255 hours of cumulative performance degradation across major DevOps SaaS platforms. It's not a question of if, but when.

Key Takeaways

- DevOps SaaS downtime is a business risk, not just an IT problem, with costs that can reach millions of dollars per hour.

- The Shared Responsibility Model makes you ultimately liable for your data's availability and integrity.

- Threat actors actively exploit downtime using phishing, DDoS, and lateral movement tactics (MITRE ATT&CK TA0001, TA0040, TA0008).

- Resilience requires the 3-2-1-1-0 backup rule, tested RTO/RPOs, and an architected failover strategy.

- Building resilience is a proactive program: start with an assessment, run a pilot, and integrate it into your security operations within 90 days.

Ready to Transform Your Resilience Posture?

Stop hoping for the best and start planning for the inevitable. Begin your 90-day journey today.

Your Next Steps:

1. Conduct a 1-hour audit this week: List your top 3 critical DevOps SaaS tools.

2. Explore a specialist tool: Review solutions like GitProtect, Rewind, or Spanning.

3. Bookmark this resource: The CISA Secure Our World initiative offers excellent general resilience guidance.

In cybersecurity, resilience isn't about avoiding the storm; it's about building a ship that can sail through it.

© 2026 Cyber Pulse Academy. This content is provided for educational purposes only.

Always consult with security professionals for organization-specific guidance.