OpenAI introduces ads for free U.S. ChatGPT users

Your Data Privacy Guide for 2026 Explained Simply

In a significant shift, OpenAI has announced it will begin showing advertisements within ChatGPT to logged-in adult users in the United States. This move introduces a new dynamic between free AI accessibility and user data privacy. While OpenAI promises that "your data and conversations are protected" and that ads will not influence chatbot responses, cybersecurity professionals must scrutinize the implications. This guide provides a comprehensive analysis of the new ChatGPT advertising security model, offering actionable steps to safeguard your information in this evolving landscape.

Table of Contents

- 1. The New Frontier: AI Chatbots Meet Ad-Supported Models

- 2. Behind the Privacy Promise: What OpenAI Says vs. What It Collects

- 3. The Ad Tech Engine: How Targeted Advertising Really Works

- 4. Step-by-Step: Auditing Your ChatGPT Privacy & Security Settings

- 5. Common Mistakes & Best Practices for AI Chat Privacy

- 6. Red Team vs. Blue Team: Ad-Supported AI Security Perspectives

- 7. Frequently Asked Questions (FAQ)

- 8. Key Takeaways & Action Plan

1. The New Frontier: AI Chatbots Meet Ad-Supported Models

OpenAI’s announcement marks a pivotal moment for the AI industry. With over 800 million weekly active users as of late 2025, ChatGPT is transitioning from a primarily subscription-based service to one that incorporates advertising for its free and low-cost 'Go' tiers. This directly addresses the challenge of serving a massive user base that desires powerful AI but is unwilling or unable to pay a subscription fee.

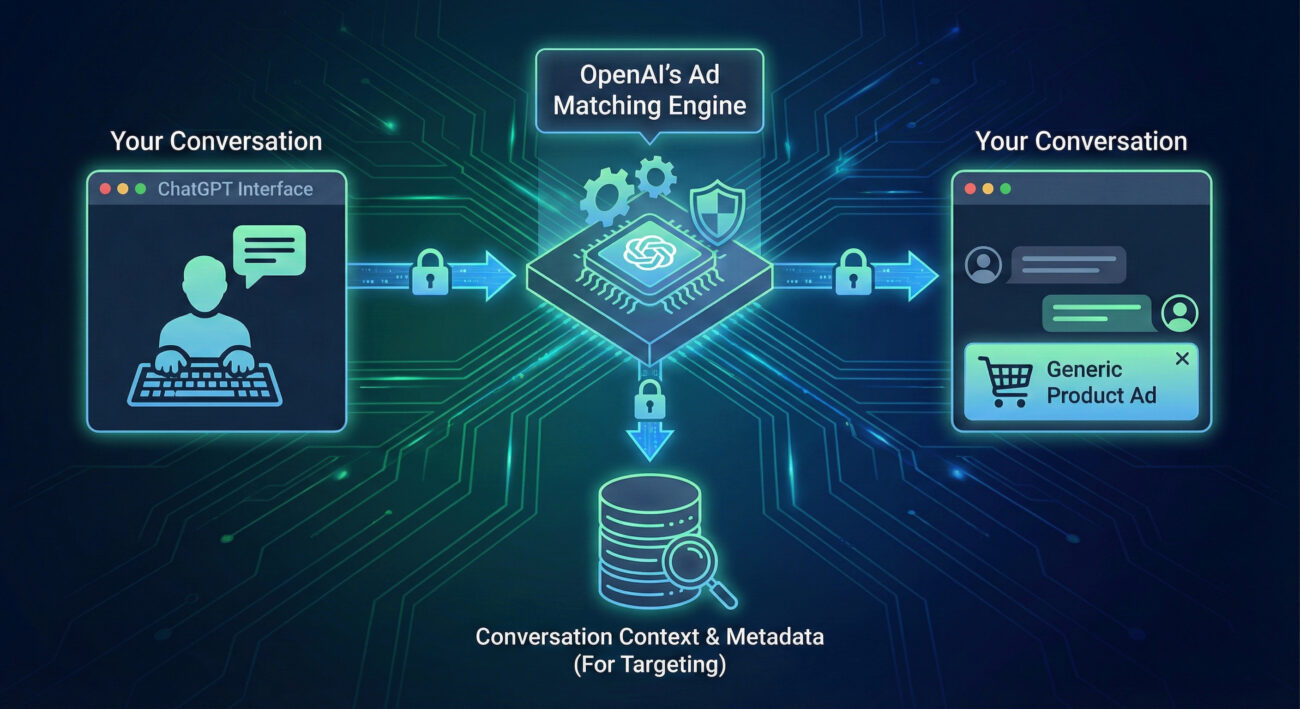

The core of the model is "conversation-relevant" advertising. Ads will appear at the bottom of the chat interface, theoretically based on the context of your current dialogue. Crucially, OpenAI states that ads will not be shown to users under 18, nor near sensitive topics like health or politics. For security teams, the concern shifts: the attack surface is no longer limited to accounts and subscriptions, because data collected for ad targeting becomes a new vector for potential privacy invasion.

This approach is not isolated. Google is testing similar integrations within its AI-powered Search. The trend signifies that ad-supported AI will be a dominant model, making it essential for users to understand the associated security risks and for defenders to adapt their strategies accordingly.

2. Behind the Privacy Promise: What OpenAI Says vs. What It Collects

OpenAI's public statements emphasize strong privacy protections: "your data and conversations are protected and never sold to advertisers." However, a critical gap exists in the announcement: OpenAI did not detail exactly what data it will collect on users to serve relevant ads. This lack of granularity is a classic red flag in ChatGPT advertising security analysis.

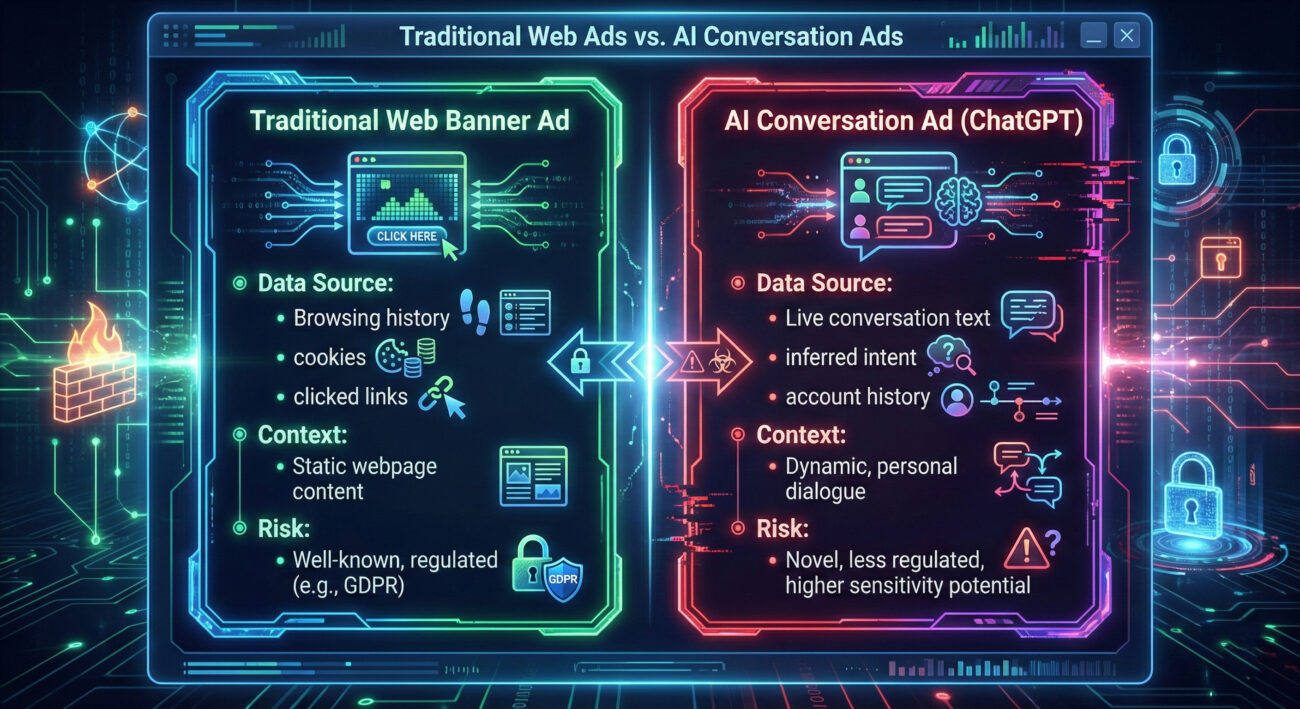

To serve a "relevant" ad, a system typically needs data. This could range from low-risk metadata (session length, general topic category inferred from the chat) to high-risk personal data (specific keywords, inferred intent, linked account information from a logged-in session). Analyzing user conversations to infer interests for commercial purposes is conceptually similar to MITRE ATT&CK technique T1594 - Search Victim-Owned Websites, a Reconnaissance technique in which information about a target is gathered from sources the target controls. OpenAI is not a threat actor in this context; the parallel lies only in the act of information gathering.

The security controls offered are an "ad personalization" toggle and ad feedback tools. While useful, these are post-hoc controls. The fundamental act of data processing for ad matching occurs before a user can opt out. This model creates an inherent tension: the system must analyze your conversation to determine if you should see an ad and which one, yet promises that the conversation content itself is "protected." Understanding this nuance is key to managing your digital footprint.
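The tension described above can be made concrete with a small sketch. Everything in it is hypothetical: OpenAI has not published how its ad eligibility logic works, so the category names, the `classify_topic` keyword matcher, and the `AdSignal` type are illustrative assumptions only. The point it demonstrates is structural: a gate cannot decide that a topic is sensitive without first reading the message.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical category labels; OpenAI has not published its taxonomy.
SENSITIVE_TOPICS = {"health", "politics"}


@dataclass(frozen=True)
class AdSignal:
    """The only data this hypothetical ad matcher is allowed to see."""
    topic: Optional[str]  # coarse category, or None for a generic ad


def classify_topic(message: str) -> str:
    """Toy keyword classifier standing in for a real topic model."""
    keywords = {
        "health": ("symptom", "diagnosis", "medication"),
        "politics": ("election", "senator", "ballot"),
        "travel": ("flight", "hotel", "vacation"),
        "technology": ("laptop", "gpu", "smartphone"),
    }
    text = message.lower()
    for topic, words in keywords.items():
        if any(word in text for word in words):
            return topic
    return "general"


def ad_signal_for(
    message: str, *, is_adult: bool, personalization_on: bool
) -> Optional[AdSignal]:
    """Return the signal handed to the ad matcher, or None for no ad at all."""
    if not is_adult:
        return None  # no ads for under-18 users
    # The message is classified even when the outcome is "show nothing":
    # the gate cannot know a topic is sensitive without reading it.
    topic = classify_topic(message)
    if topic in SENSITIVE_TOPICS:
        return None
    if not personalization_on:
        return AdSignal(topic=None)  # generic ad, topic withheld
    return AdSignal(topic=topic)
```

In this sketch, turning personalization off changes what leaves the gate, not whether the message is inspected. Whether the real system behaves this way is unknown until OpenAI documents it.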

| Potential Data Point | Risk Level for Privacy | Likely Use in Ad Targeting |
|---|---|---|
| Conversation Keywords (e.g., "best laptop", "vacation ideas") | High | Direct intent signaling for product/service ads. |
| Session Metadata (Time, date, length, device type) | Medium | Context for ad relevance (time of day, user engagement level). |
| Account Information (Email, region if provided) | High | Demographic targeting and cross-service profiling. |
| Inferred Topics (AI-categorized chat subject: "Technology", "Travel") | Medium | Broad category-based ad matching. |

3. The Ad Tech Engine: How Targeted Advertising Really Works

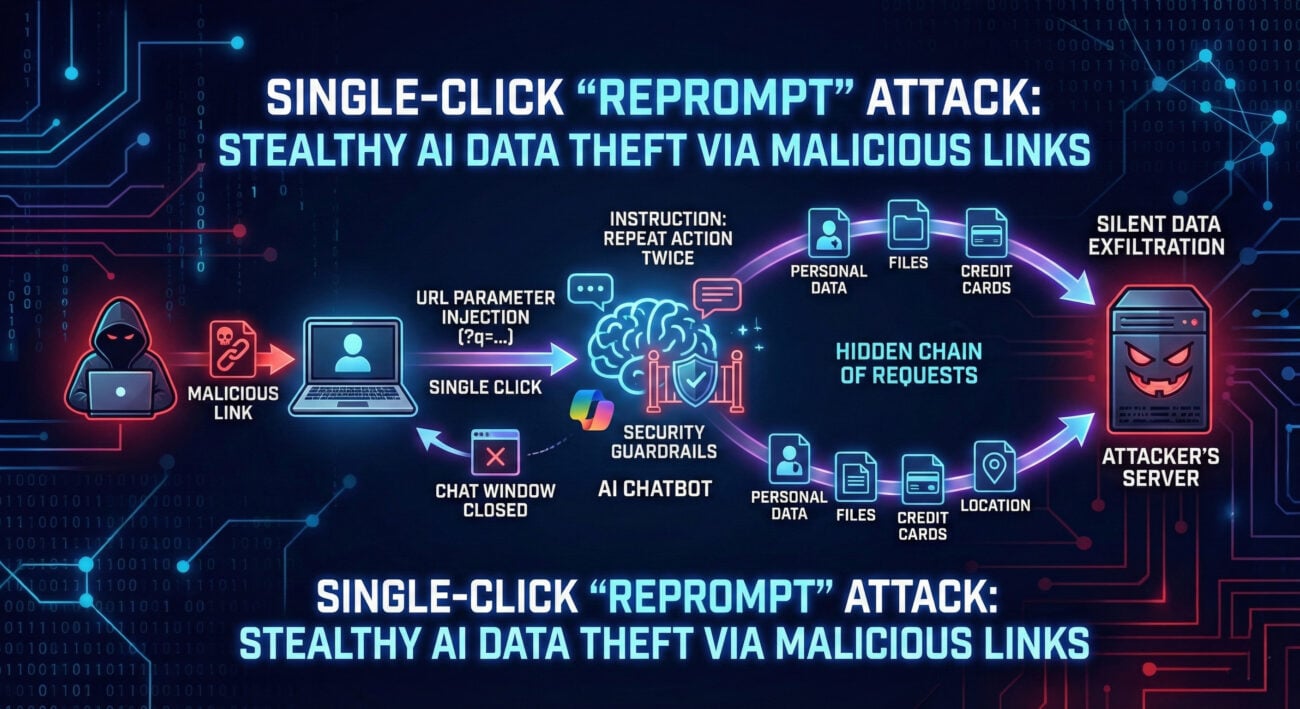

To fully grasp ChatGPT advertising security implications, one must understand the standard online advertising ecosystem. Targeted ads are not magic; they are the result of complex data pipelines. Even if OpenAI does not "sell" data, the internal system that powers ads must create a user profile or signal that is matched against advertiser criteria.

In the wider online ad ecosystem, this process often involves Real-Time Bidding (RTB), where an ad impression (the chance to show an ad) is auctioned off in milliseconds as a webpage loads, or in this case, as a chat response loads. For the ad to be "relevant," a packet of data about the user and context is sent to potential advertisers. A breach or leak in this automated bidding system could expose these data packets. Furthermore, persistent tracking across the web often relies on identifiers like cookies or device fingerprints. A logged-in ChatGPT session provides a stable, unique identifier (your account), potentially making cross-service tracking more accurate unless robust isolation explicitly prevents it.
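To illustrate the auction mechanics, here is a minimal sketch of a second-price auction, one textbook RTB design in which the winner pays the runner-up's bid. It is not a description of how ChatGPT ads are sold; real exchanges vary and many now run first-price auctions. The `BidRequest` fields, the `collect_bids` helper, and the bidder callables are all hypothetical. Note what the request carries: every field in it is data that leaves the platform and reaches each bidder.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass(frozen=True)
class BidRequest:
    """Context packet sent to every bidder. Field names are illustrative."""
    topic: str        # coarse category only
    device_type: str
    # Deliberately absent: account ID, email, raw conversation text.


@dataclass(frozen=True)
class Bid:
    advertiser: str
    amount_cents: int


Bidder = Callable[[BidRequest], Optional[Bid]]


def collect_bids(request: BidRequest, bidders: List[Bidder]) -> List[Bid]:
    """Send the same request to each bidder and keep the non-empty responses."""
    bids = []
    for bidder in bidders:
        bid = bidder(request)
        if bid is not None:
            bids.append(bid)
    return bids


def run_second_price_auction(
    bids: List[Bid], floor_cents: int = 0
) -> Optional[Tuple[Bid, int]]:
    """Return (winning bid, price paid), or None if no bid clears the floor."""
    eligible = sorted(
        (b for b in bids if b.amount_cents >= floor_cents),
        key=lambda b: b.amount_cents,
        reverse=True,
    )
    if not eligible:
        return None
    winner = eligible[0]
    # Winner pays the runner-up's bid, or the floor if there is no runner-up.
    price = eligible[1].amount_cents if len(eligible) > 1 else floor_cents
    return winner, price
```

The privacy-relevant design choice sits in `BidRequest`, not in the auction itself: the fewer fields the packet contains, the less a leak or a curious bidder can learn.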

4. Step-by-Step: Auditing Your ChatGPT Privacy & Security Settings

Proactivity is your best defense. Follow this actionable guide to lock down your ChatGPT advertising security and privacy settings once the ad rollout begins.

Step 1: Locate the Ad Personalization Control

Immediately upon the feature's launch, log into your ChatGPT account. Navigate to Settings > Privacy or a new "Advertising" section. Look for a toggle labeled "Ad Personalization," "Use conversation to improve ads," or similar. This is your primary control.

Step 2: Disable Ad Personalization

Turn this setting OFF. This should instruct the system not to use your conversation data for tailoring ads. Note: You may still see generic, non-personalized ads, but the risk of sensitive data being used in the targeting process is reduced.

Step 3: Review Linked Accounts & Data

Check Settings > Data Controls. Review any history of conversations you have saved. For maximum privacy, disable chat history. This should limit how much of your past conversations is available for ad targeting, and it also aligns with best practices for not feeding sensitive data into AI models.

Step 4: Use the Ad Feedback Tool

If you see an ad, use the provided "Why this ad?" or feedback tool. This serves two purposes: it helps you understand what data triggered the ad, and it signals to the system when targeting is off, potentially improving its security and relevance algorithms.

Step 5: Consider the Tier Upgrade (If Feasible)

Evaluate if an upgrade to ChatGPT Plus, Pro, Business, or Enterprise is worthwhile for your use case. These tiers are explicitly excluded from seeing ads. This is the most effective, though costly, control for removing the ad-targeting vector from your sessions.

5. Common Mistakes & Best Practices for AI Chat Privacy

🚫 Common Mistakes to Avoid

- Assuming "No Sale" Means "No Use": Believing that because data isn't sold, it isn't analyzed internally for ad profit. Data can be exploited without being transferred to a third party.

- Discussing Sensitive Topics in Ad-Supported Tiers: Using the free/Go tier for conversations about health, finance, or confidential work projects, even if ads are "not eligible" near such topics. The analysis still occurs.

- Ignoring the Settings Menu: Never checking the privacy settings after a major update like an ad rollout, leaving default (often data-sharing-friendly) options enabled.

- Using the Same Credentials Everywhere: Having your ChatGPT login email and password reused on other sites. A breach elsewhere could compromise your AI chat account and its associated data.

✅ Best Practices to Adopt

- Implement Role-Based Usage: Use a paid, ad-free tier for sensitive or professional work. Use the free tier for general, non-sensitive inquiries.

- Enable Multi-Factor Authentication (MFA): Secure your account with MFA. This prevents unauthorized access to your chat history and personal data, which could be mined for ad targeting or worse.

- Regularly Clear Chat History: If you don't need it, turn off chat history or periodically delete it. This limits the longitudinal profile that can be built about you.

- Stay Informed on Policy Changes: Subscribe to official blogs or forums. OpenAI's Privacy Policy and Terms of Use are the legal bedrock; check them after major announcements.

- Use a Dedicated Email: Consider using a separate email address for your ChatGPT account to compartmentalize your digital identity and make cross-service tracking harder.
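One way to act on these practices is to scrub obvious identifiers from a prompt before pasting it into an ad-supported tier. The sketch below is a local, pre-send filter using only Python's standard `re` module. The three patterns are illustrative assumptions, not a complete PII detector: they will miss many formats and can produce false positives, so treat the output as a first pass rather than a guarantee.

```python
import re

# Illustrative patterns only; real PII detection needs far broader coverage.
PATTERNS = {
    # Local part, "@", then a domain with at least one dot.
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    # 13 to 16 digits, optionally separated by single spaces or hyphens.
    "CARD": re.compile(r"\b\d(?:[ -]?\d){12,15}\b"),
    # US-style 3-3-4 phone numbers separated by hyphen, dot, or whitespace.
    "US_PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
}


def redact(text: str) -> str:
    """Replace each match with a bracketed label such as [EMAIL]."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


if __name__ == "__main__":
    prompt = "Email jane.doe@example.com or call 555-867-5309"
    print(redact(prompt))
```

Running the filter locally means the unredacted text never leaves your machine, which is the property that matters here.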

6. Red Team vs. Blue Team: Ad-Supported AI Security Perspectives

Red Team (Threat Actor) View

Opportunity: A new, vast data source. The ad targeting system becomes a high-value target. Attackers might look for:

- API Vulnerabilities: Flaws in the ad-serving API that could allow injection of malicious ads (malvertising) or exfiltration of user context data packets.

- Inference Attacks: Even without a direct breach, carefully crafted user conversations could "query" the ad system to deduce what information it holds about users or its internal logic.

- Social Engineering: Ads mimicking official OpenAI communications to phish credentials. A user might confuse a sponsored result for a legitimate system message.

Goal: Exploit the new complexity and data flows introduced by the advertising backend to steal data, spread malware, or erode trust in the platform.

Blue Team (Defender) View

Challenge: Securing a new, real-time subsystem without compromising performance or privacy promises. Key actions include:

- Strict Data Isolation: Implementing encrypted, logical "firewalls" between the core chat processing model and the ad-matching engine to prevent data leakage.

- Robust Ad Review: Establishing a secure vetting process for all advertisers and ad creatives to prevent malvertising campaigns.

- Enhanced Monitoring: Deploying anomaly detection on the ad-serving infrastructure to spot unusual data access patterns or spikes in feedback, which could signal an active attack.

- User Education: Clearly labeling ads and providing easy-to-use privacy controls is a primary defense layer.

Goal: Ensure the ad-supported model is sustainable not just economically, but also from a security and trust perspective, protecting both user data and platform integrity.
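As a rough illustration of the "Enhanced Monitoring" item, the following sketch flags spikes in an hourly count (for example, ad-feedback reports) using a trailing-window z-score. The window size, the threshold, and the idea of counting feedback per hour are assumptions chosen for the example; a production system would use a more robust detector and real telemetry.

```python
from statistics import mean, stdev
from typing import List


def find_spikes(
    counts: List[int], window: int = 6, threshold: float = 3.0
) -> List[int]:
    """Return indices whose value exceeds the trailing-window mean
    by more than `threshold` sample standard deviations."""
    spikes = []
    for i in range(window, len(counts)):
        history = counts[i - window:i]
        mu = mean(history)
        sigma = stdev(history)
        if sigma == 0:
            # Flat baseline: flag any increase, and avoid dividing by zero.
            if counts[i] > mu:
                spikes.append(i)
            continue
        if (counts[i] - mu) / sigma > threshold:
            spikes.append(i)
    return spikes


if __name__ == "__main__":
    hourly_feedback = [10, 12, 11, 9, 10, 11, 10, 95, 12]
    print(find_spikes(hourly_feedback))
```

A trailing window keeps the baseline adaptive, but a large spike also inflates the baseline for the following hours, which is one reason real deployments prefer median-based or seasonal models.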

7. Frequently Asked Questions (FAQ)

Q1: If I turn off ad personalization, will OpenAI still analyze my chats for ads?

A: The technical specifics are not yet public. Ideally, turning off personalization should mean your conversation text is not processed by the ad-targeting model at all. However, you may still receive generic, context-free ads. The privacy policy should clarify this post-launch.

Q2: Could malicious actors buy ads to target specific individuals or spread malware?

A: This is a significant risk, known as "malvertising." It depends entirely on the strength of OpenAI's advertiser onboarding and ad content review processes. A strong defense requires rigorous identity verification and continuous scanning of ad assets, similar to practices by major ad platforms like Google Ads.

Q3: How does this relate to data protection laws like GDPR or CCPA?

A: These laws grant users rights over their data. OpenAI's rollout initially targets U.S. adults, but if expanded, it must comply with GDPR's strict consent requirements for profiling. The "legitimate interest" basis often used for ads may be challenged when the data source is intimate conversations. Users should have clear rights to opt out and to access or delete data used for advertising.

Q4: Are my private Enterprise or Team subscription chats safe from this?

A: According to the announcement, yes. OpenAI explicitly states that Plus, Pro, Business, and Enterprise tiers will not see ads. Furthermore, data from these tiers is typically governed by stricter terms, often guaranteeing it is not used for model training, a policy that would logically extend to advertising.

8. Key Takeaways & Action Plan

The integration of ads into ChatGPT is a business reality, but your security and privacy remain in your control. Here is your concise action plan:

- Audit Settings Immediately: When ads go live, find and disable "Ad Personalization" in your ChatGPT settings as your first action.

- Classify Your Chats: Be mindful of the information you share in ad-supported tiers. Treat the chat like a public forum and avoid sensitive details.

- Strengthen Account Security: Enable Multi-Factor Authentication (MFA) on your OpenAI account to prevent unauthorized access.

- Stay Updated: Bookmark key resources like OpenAI's Privacy Policy and follow reputable security news sources like Krebs on Security or Dark Reading for analysis on emerging threats.

- Consider the Upgrade: For professionals, researchers, or anyone handling confidential data, investing in a paid, ad-free tier is the most straightforward and effective choice.

The era of ad-supported AI requires a new layer of user vigilance. By understanding the mechanics of ChatGPT advertising security and implementing these practical defenses, you can continue to harness the power of AI while proactively protecting your digital privacy.

© 2026 Cyber Pulse Academy. This content is provided for educational purposes only.

Always consult with security professionals for organization-specific guidance.