Critical Vulnerabilities in Anthropic's MCP Git Server Allow File Access and Code Execution

Critical AI Security Flaws & Protection Guide Explained Simply

In the rapidly evolving landscape of AI-integrated development, a critical security flaw recently came to light. Researchers discovered not one, but three severe vulnerabilities in Anthropic's official Git Model Context Protocol (MCP) server. These MCP server vulnerabilities (CVE-2025-68143, CVE-2025-68144, CVE-2025-68145) created a perfect storm, allowing attackers to read sensitive files, delete data, and ultimately execute malicious code on vulnerable systems. This incident serves as a stark warning about the security risks in the AI toolchain and underscores why every developer and security professional must understand the mechanics of such attacks.

Table of Contents

- Executive Summary: The Core of the MCP Server Breach

- Vulnerability Deep Dive: The Three Flaws Explained

- Real-World Attack Scenario: From Prompt to Payload

- Step-by-Step Attack Chain Analysis

- MITRE ATT&CK Mapping: Understanding the Adversary Playbook

- Red Team vs. Blue Team Perspective

- Mitigation Strategies & Best Practices

- Common Mistakes & Proactive Security

- Frequently Asked Questions (FAQ)

- Key Takeaways & Conclusion

Executive Summary: The Core of the MCP Server Breach

MCP (Model Context Protocol) servers act as bridges, allowing Large Language Models (LLMs) to interact with external tools and data sources, like Git repositories. The vulnerabilities in Anthropic's mcp-server-git package stemmed from a fundamental failure to properly validate and sanitize user input before passing it to system-level commands. This is a classic security failure with modern AI-era consequences.

The impact was severe: a remote attacker could use prompt injection, for example through a malicious README file or issue comment that an AI assistant processes, to trigger these flaws. No direct network access to the victim's machine was needed. By chaining the three vulnerabilities, an attacker could achieve Remote Code Execution (RCE) and gain full control over the server environment. Because the affected package is the "canonical" reference implementation, these MCP server vulnerabilities posed a systemic risk to the entire emerging MCP ecosystem.

Vulnerability Deep Dive: The Three Critical MCP Server Vulnerabilities Explained

Let's break down each of the three CVEs to understand the exact technical missteps. This clarity is crucial for both identifying similar flaws in other code and for effective defense.

CVE-2025-68143: The Path Traversal in `git_init`

This was the initial foothold. The git_init tool accepted a user-supplied path to initialize a new Git repository but performed no validation on that path.

Technical Behavior: An attacker could provide a path like ../../../etc, which resolves to a directory far outside the intended workspace. The server would then attempt to create a .git folder and its internal structure there, turning an arbitrary (and possibly sensitive) directory into a Git repository and preparing the ground for further exploitation. The core issue was the lack of path normalization and restriction to intended working directories.

CVE-2025-68144: Argument Injection in Git Commands

This flaw turned a capability into a weapon. The git_diff and git_checkout functions took user-controlled arguments and appended them directly to git CLI commands without sanitization.

Technical Behavior: Imagine an AI assistant is asked to "diff against the branch described in this issue." If the issue names the branch as --output=/tmp/payload.sh, the server might execute git diff --output=/tmp/payload.sh. Git treats a value that begins with a dash as a command-line option rather than a ref, so the injected flag redirects the diff output to an attacker-chosen file and overwrites it. The same pattern applied to git_checkout, leading to data loss or manipulation.
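One way to close this class of bug is to refuse option-like values before they ever reach the Git CLI. The sketch below is illustrative only and is not the code from the actual patch; the function name `build_diff_argv` and the allow-list pattern are assumptions made for this example, and the pattern is deliberately stricter than what Git accepts as a ref name.

```python
import re

# Conservative allow-list for ref names (an assumption for this sketch;
# real Git permits more characters than this pattern does).
_SAFE_REF = re.compile(r"^[A-Za-z0-9][A-Za-z0-9._/-]*$")


def build_diff_argv(target: str) -> list[str]:
    """Return an argv list for `git diff <target>`, or raise ValueError."""
    # Anything starting with a dash would be parsed by Git as an option.
    if target.startswith("-"):
        raise ValueError(f"refusing option-like ref: {target!r}")
    if not _SAFE_REF.fullmatch(target):
        raise ValueError(f"refusing unexpected ref: {target!r}")
    # A list (not a shell string) keeps each argument in its own argv slot.
    return ["git", "diff", target]
```

Passing the returned list to `subprocess.run` without `shell=True` avoids shell interpretation as well, although that alone would not have stopped the flag from being read as an option; the leading-dash check is what does that here.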

CVE-2025-68145: Path Traversal via the `--repository` Flag

This vulnerability bypassed intended restrictions. The server had a --repository flag to limit operations to a specific repo path, but the validation was insufficient.

Technical Behavior: An attacker could specify a repository path like /intended/repo/../../../etc. The validation might only check that the path started with /intended/repo/, but the subsequent traversal sequences (../) would allow operations to "escape" and target any other repository or directory on the filesystem, violating the security boundary.
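The difference between a string-prefix check and a canonical-path check is easy to see in a few lines. This is a minimal sketch, not the server's real validation code; both function names are hypothetical, and the second one assumes a POSIX-style filesystem.

```python
import os


def naive_is_allowed(root: str, requested: str) -> bool:
    # Flawed: compares raw strings, so "../" segments slip through.
    return requested.startswith(root)


def canonical_is_allowed(root: str, requested: str) -> bool:
    # Resolve "..", "." and symlinks first, then compare whole path components.
    real_root = os.path.realpath(root)
    real_req = os.path.realpath(requested)
    try:
        return os.path.commonpath([real_root, real_req]) == real_root
    except ValueError:
        # Raised on Windows when the two paths live on different drives.
        return False
```

With root `/intended/repo`, the naive check accepts `/intended/repo/../../../etc` because the string begins with the expected prefix, while the canonical check rejects it because the resolved path lies outside the root. Comparing path components rather than characters also rejects look-alike siblings such as `/intended/repo-evil`.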

| CVE Identifier | CVSS v3 Score | Type | Affected Component | Root Cause |
|---|---|---|---|---|
| CVE-2025-68143 | 8.8 (High) | Path Traversal | git_init tool | Missing path validation during repo creation |
| CVE-2025-68144 | 8.1 (High) | Argument Injection | git_diff, git_checkout | Unsanitized user input passed to Git CLI |
| CVE-2025-68145 | 7.1 (High) | Path Traversal | --repository flag logic | Insufficient path sanitization for flag |

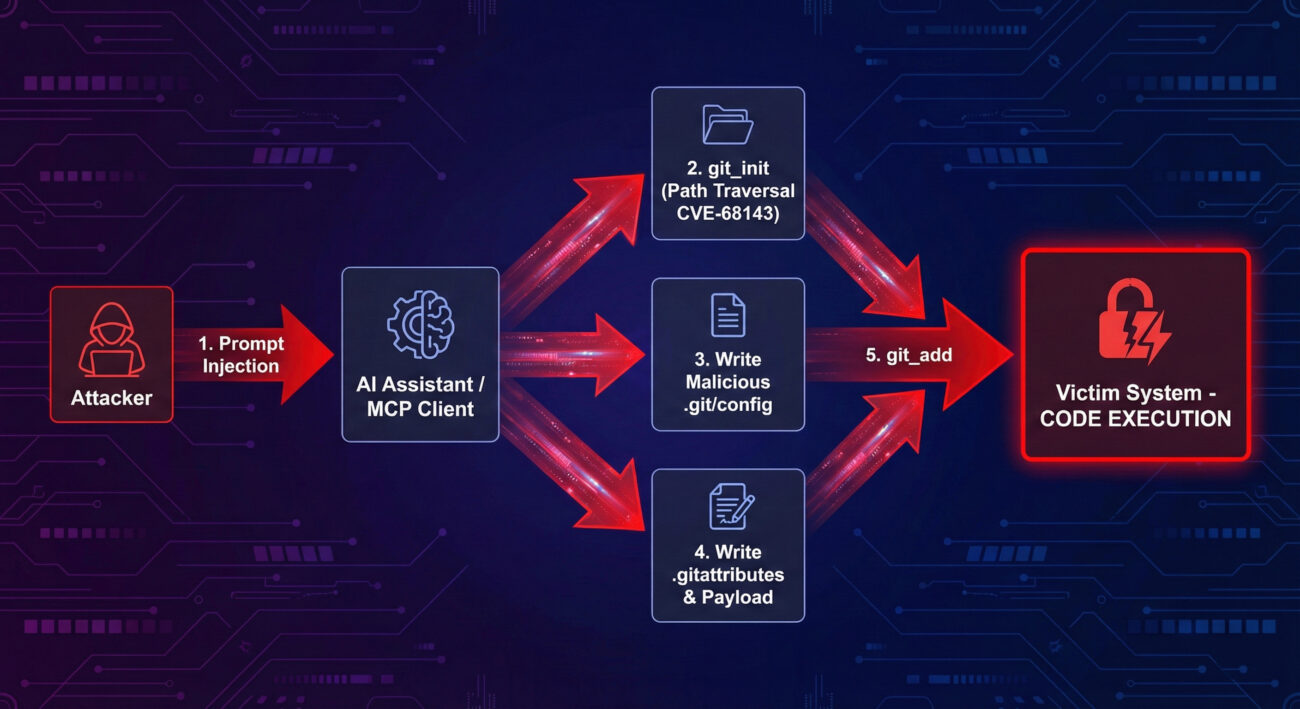

Real-World Attack Scenario: From Prompt Injection to System Compromise

How would these theoretical flaws be used in a real attack? The research by Cyata outlined a chained exploit leveraging the Filesystem MCP server alongside the Git server.

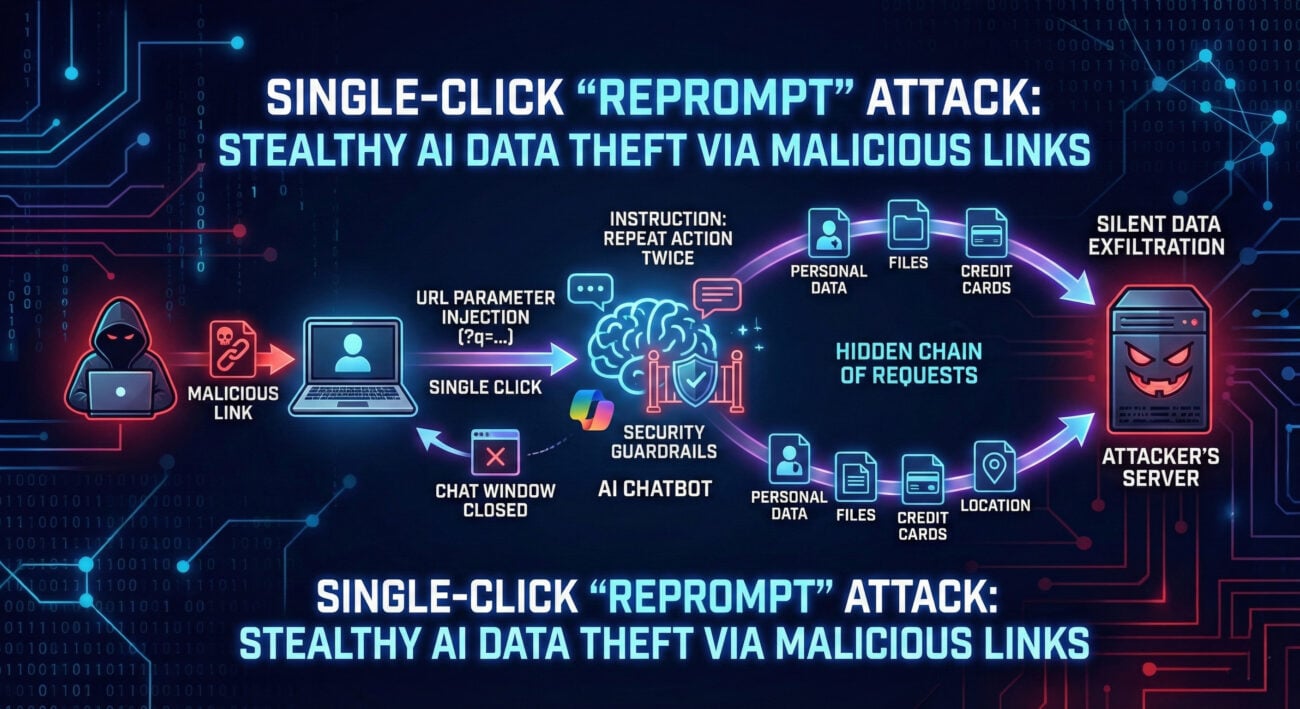

The Attack Vector: The entry point is prompt injection. An attacker plants malicious instructions in a location an AI assistant will read: a poisoned commit message, a malicious issue, or even a webpage the LLM is prompted to summarize. These instructions are crafted to trigger the vulnerable MCP tools.

The Goal - RCE: The endgame is to abuse Git's "clean filter" mechanism. Filters are scripts Git can run automatically when adding files to the repository. By writing a malicious filter script and a .gitattributes file to trigger it, the attacker can execute arbitrary code the moment the victim (or the AI agent acting on their behalf) runs a simple git add command.

Step-by-Step Attack Chain Analysis

Here is the detailed kill chain, showing how an attacker could sequentially exploit these MCP server vulnerabilities.

Step 1: Establish a Foothold with `git_init`

Using prompt injection, the attacker tricks the AI into calling git_init with a path to a writable directory on the victim's system (e.g., /tmp/attack). Due to CVE-2025-68143, this works even if the path is outside intended bounds, creating a Git repository the attacker can target.

Step 2: Weaponize the Git Configuration

The attacker then uses the Filesystem MCP server (or other means) to write a malicious .git/config file into the newly created repository. This configuration defines a "clean" filter that points to a shell script they will deploy in the next step.

Step 3: Deploy the Payload

Next, the attacker writes the actual payload, a shell script (e.g., payload.sh) that will be executed. They also write a .gitattributes file that associates a specific file extension (like .trigger) with the malicious clean filter defined in Step 2.

Step 4: Set the Trap

The attacker creates a file with the triggering extension (e.g., exploit.trigger) in the repository. The mere existence of this file is not enough; it needs to be staged.

Step 5: Trigger Execution with `git_add`

Finally, the attacker prompts the AI to add the file to the repository (e.g., "please add the exploit.trigger file"). When the victim's system runs git add exploit.trigger, Git sees the clean filter in .git/config, executes the specified malicious shell script, and grants the attacker Remote Code Execution.
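Because the chain above depends on a filter driver appearing in .git/config, defenders can look for exactly that. The following is a rough detection sketch, not a complete Git config parser: the function name `find_filter_commands` is hypothetical, it only handles the common `[filter "name"]` section syntax, and it does not strip inline comments or follow include directives.

```python
import re

_FILTER_SECTION = re.compile(r'^\s*\[\s*filter\s+"([^"]+)"\s*\]\s*$', re.IGNORECASE)
_ANY_SECTION = re.compile(r"^\s*\[")
_COMMAND_KEY = re.compile(r"^\s*(clean|smudge|process)\s*=\s*(.*?)\s*$", re.IGNORECASE)


def find_filter_commands(config_text: str) -> list[tuple[str, str, str]]:
    """Return (driver, key, command) for each filter command in a Git config."""
    found = []
    driver = None  # name of the filter section we are currently inside, if any
    for line in config_text.splitlines():
        section = _FILTER_SECTION.match(line)
        if section:
            driver = section.group(1)
            continue
        if _ANY_SECTION.match(line):
            driver = None  # entered some other section
            continue
        key = _COMMAND_KEY.match(line)
        if driver is not None and key:
            found.append((driver, key.group(1).lower(), key.group(2)))
    return found
```

Running this over the .git/config of every repository an MCP server can reach, and alerting on any result that a developer did not knowingly configure (Git LFS is a common legitimate one), gives a cheap signal for Steps 2 and 3 of the chain.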

MITRE ATT&CK Mapping: Understanding the Adversary Playbook

Framing these MCP server vulnerabilities within the MITRE ATT&CK® framework helps defenders map the techniques to their own detection and mitigation strategies. This attack employs several key techniques:

- T1190: Exploit Public-Facing Application – The MCP server, though often behind an AI interface, is the application being exploited via its API.

- T1059: Command and Scripting Interpreter – The ultimate goal is execution of shell commands/scripts via the Git filter mechanism.

- T1221: Template Injection – Offered as the closest analogue to prompt injection, where untrusted input (the prompt) is interpreted and executed in a trusted context (the AI's tool call). ATT&CK itself defines this technique around document templates, so treat the mapping as approximate.

- T1552.001: Unsecured Credentials - Credentials In Files – Path traversal could be used to access sensitive `.git/config` files or other configuration files containing secrets from other repositories.

- T1574: Hijack Execution Flow – Abusing Git's clean filter is a direct hijacking of a legitimate workflow (`git add`) to execute malicious code.

Understanding this mapping allows Blue Teams to hunt for related activity, such as unusual child processes spawned from Git operations or anomalous file writes to .git/config.

Red Team vs. Blue Team: Perspectives on the MCP Server Vulnerabilities

Red Team (Attack) Perspective

Opportunity: These vulnerabilities are a gold mine. They are exploitable via indirect input (prompt injection), making attribution and initial detection difficult. Chaining them leads directly to high-value RCE.

- Initial Focus: Identify applications using vulnerable versions of `mcp-server-git`. Look for AI/LLM interfaces that handle Git operations.

- Exploitation Path: Craft convincing, obfuscated prompts that trick the AI into making the specific MCP tool calls. Test payloads that work within argument/character limits of the injection point.

- Post-Exploitation: The Git repository created during the attack provides excellent persistence and a "legitimate"-looking location to hide payloads.

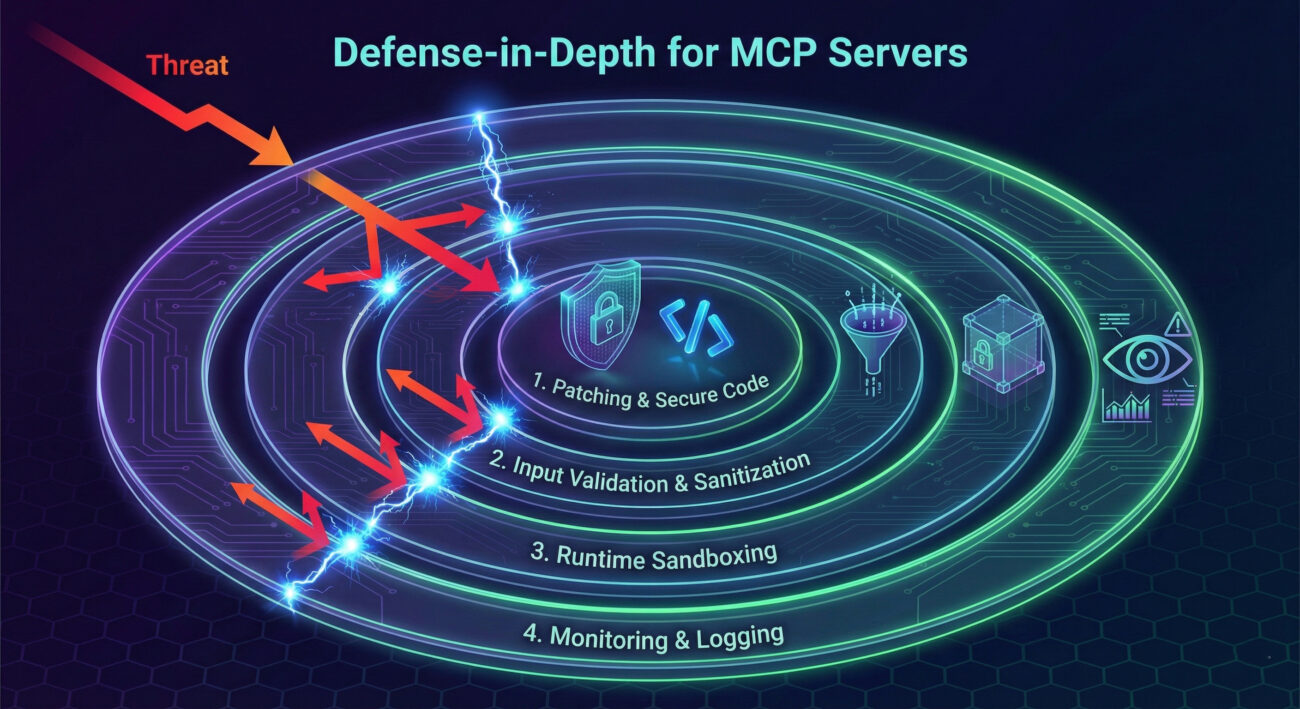

Blue Team (Defense) Perspective

Challenge & Strategy: Defending requires a multi-layered approach, as the attack vector (AI prompt) is non-traditional.

- Immediate Action: Update immediately to a patched version of `mcp-server-git`. The fixes shipped in 2025.9.25 and 2025.12.18, so 2025.12.18 or later is the safe target. This is the most critical step.

- Detection Strategy: Monitor for suspicious Git processes, especially those spawning shells or writing to `.git/config` files outside of normal development activity. Implement strict allow-listing for MCP server capabilities where possible.

- Architectural Defense: Run MCP servers in isolated, sandboxed containers with minimal filesystem permissions. Apply the principle of least privilege rigorously to the service account.

Mitigation Strategies & Best Practices

Addressing MCP server vulnerabilities requires more than just a patch. Here is a framework for building a resilient AI-integrated development environment.

1. Patching & Dependency Management

- Immediate Patching: Ensure `mcp-server-git` is updated to version 2025.12.18 or later. The patch removed the vulnerable `git_init` tool and added robust input validation.

- Automated Scanning: Integrate Software Composition Analysis (SCA) tools like OWASP Dependency-Track or Anchore Grype into CI/CD pipelines to flag vulnerable dependencies automatically.

2. Secure Development & Input Validation

- Never Trust Input: All user (or AI-model) input must be treated as hostile. Implement strict allow-listing for file paths and command arguments.

- Use Safe APIs: Instead of shelling out to the `git` CLI, use secure native Git libraries (e.g., `pygit2` for Python) that provide structured APIs, eliminating argument injection risks.

- Context-Aware Sanitization: For MCP servers, validation must be context-aware. A path valid for a Git operation must also be checked against the server's configured root directory and normalized to prevent traversal.

3. Runtime Hardening & Isolation

- Sandboxing: Run MCP servers in isolated containers (e.g., Docker with read-only root filesystems) or virtual machines. Limit kernel capabilities (e.g., drop `CAP_DAC_OVERRIDE`, `CAP_SYS_ADMIN`).

- Least Privilege: Execute the server process under a dedicated, unprivileged user account with filesystem permissions limited to the necessary directories.

- Network Segmentation: Restrict the MCP server's network access. It typically does not need outgoing internet access.

Common Mistakes & Proactive Security Measures

🚫 Common Security Mistakes

- Treating AI Input as Trusted: Assuming prompts or data processed by an LLM are safe is a fatal error. The AI is just another (potentially vulnerable) input channel.

- Direct CLI Command Concatenation: Building shell commands by string concatenation with user input is inherently risky. It is the root cause of CVE-2025-68144.

- Incomplete Path Validation: Only checking if a path "starts with" a safe prefix (as in CVE-2025-68145) is insufficient. Validation must resolve relative segments (like `../`) and check the final canonical path.

- Ignoring the Supply Chain: Overlooking the security of "helper" tools and dependencies, especially in novel ecosystems like MCP, creates massive blind spots.

✅ Proactive Security Measures

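- Implement Comprehensive Input Sanitization: Use dedicated libraries for shell argument escaping and path normalization. For Python, consider `shlex.quote()` when a shell is unavoidable, and `os.path.realpath()` followed by a containment check against the allowed root (for example with `os.path.commonpath()`). Note that `os.path.normpath()` alone does not resolve symlinks, and a raw string-prefix test can be fooled by sibling directories that share the prefix.

- Conduct Threat Modeling for AI Integrations: Explicitly model the AI/LLM as a new, complex attack surface. Ask: "How could a malicious actor use the AI to manipulate each backend tool?"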

- Adopt a Zero-Trust Architecture for MCP: Treat every MCP tool call as potentially hostile. Implement explicit, granular allow-lists for tools, arguments, and accessible file paths.

- Enable Extensive Audit Logging: Log all MCP tool invocations with full arguments and user context. This data is vital for detecting prompt injection attempts and post-incident analysis.
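The allow-list and audit-logging measures above can be combined in a single gate in front of the tool dispatcher. This is a minimal sketch under stated assumptions: the `ALLOWED_TOOLS` policy, the argument names, and the `authorize_tool_call` function are all hypothetical and are not part of any MCP SDK.

```python
import json
import logging

audit_log = logging.getLogger("mcp.audit")

# Hypothetical policy: tool name -> set of argument names it may receive.
ALLOWED_TOOLS = {
    "git_status": {"repo_path"},
    "git_diff": {"repo_path", "target"},
    "git_add": {"repo_path", "files"},
}


def authorize_tool_call(tool: str, arguments: dict, user: str) -> bool:
    """Log the call, then allow it only if tool and argument names are listed."""
    allowed_args = ALLOWED_TOOLS.get(tool)
    allowed = allowed_args is not None and set(arguments) <= allowed_args
    # One JSON object per line keeps the log easy to search after an incident.
    audit_log.info(json.dumps(
        {"tool": tool, "arguments": arguments, "user": user, "allowed": allowed},
        default=str,
        sort_keys=True,
    ))
    return allowed
```

A gate like this only checks names, so argument values (paths, refs) still need their own validation. Its value is that denied and unusual calls leave a record even when the prompt injection itself is invisible.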

Frequently Asked Questions (FAQ)

Q1: I don't use Anthropic's Claude. Am I still affected by these MCP server vulnerabilities?

A: Potentially, yes. While the vulnerability was found in Anthropic's server, MCP is an open protocol. Any AI application (using ChatGPT, custom LLMs, etc.) that integrates the vulnerable mcp-server-git package is at risk. The key is the dependency, not the specific AI front-end.

Q2: The attack requires prompt injection. Isn't that the AI's problem, not the server's?

A: This is a critical misunderstanding. Defense-in-depth is paramount. While preventing prompt injection is important, backend systems must be resilient even if input is malicious. A backend tool should never allow arbitrary code execution because it received a bad instruction. This is the core lesson of these MCP server vulnerabilities.

Q3: What's the simplest first step I should take right now?

A: Update your dependencies. Run pip install --upgrade mcp-server-git (or equivalent) and verify you are on version 2025.12.18 or later. Then, audit your projects for direct or transitive dependencies on this package.
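To verify the installed version programmatically, something like the following can run inside the same Python environment as the server. It is a sketch that assumes the package uses plain date-style versions such as 2025.12.18; the helper names are hypothetical, and versions with suffixes (for example `.post1`) would need a real version parser.

```python
from importlib.metadata import PackageNotFoundError, version

MINIMUM_PATCHED = (2025, 12, 18)


def parse_calver(text: str) -> tuple[int, ...]:
    """Turn '2025.12.18' into (2025, 12, 18); raises ValueError on other shapes."""
    return tuple(int(part) for part in text.split("."))


def is_patched(installed: str) -> bool:
    # Tuple comparison orders (2025, 9, 25) before (2025, 12, 18) correctly,
    # which a plain string comparison would not.
    return parse_calver(installed) >= MINIMUM_PATCHED


def check_installed(package: str = "mcp-server-git") -> str:
    try:
        found = version(package)
    except PackageNotFoundError:
        return f"{package} is not installed in this environment"
    status = "patched" if is_patched(found) else "VULNERABLE, upgrade"
    return f"{package} {found}: {status}"
```

Calling `print(check_installed())` in each virtual environment or container image gives a quick answer, but it does not replace a dependency scan, since the package may also be pulled in transitively elsewhere.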

Q4: Where can I learn more about secure coding for MCP servers?

A: Start with the general OWASP Top Ten, focusing on Injection and Broken Access Control. For MCP-specific guidance, monitor Anthropic's official MCP documentation and the MITRE CWE listings for Path Traversal (CWE-22), Argument Injection (CWE-88), and OS Command Injection (CWE-78).

Key Takeaways & Conclusion

The disclosure of these MCP server vulnerabilities is a watershed moment for AI security. It highlights that the integration of powerful LLMs with backend tooling creates a new and complex attack surface where traditional vulnerabilities can have exponentially greater impact.

- AI Amplifies Old Flaws: Classic vulnerabilities like Path Traversal and Command Injection become far more dangerous when exploitable via indirect, hard-to-monitor prompt injection attacks.

- Supply Chain Security is Non-Negotiable: The "reference implementation" of a critical protocol was vulnerable. This underscores the need for rigorous security reviews of all dependencies in the AI toolchain.

- Defense Requires a New Mindset: Defending AI-integrated systems requires extending security practices to encompass the LLM as a potential threat actor. Input validation, least privilege, and sandboxing are more critical than ever.

- Patching is Just the Start: Updating `mcp-server-git` closes these specific holes, but the architectural lessons must be applied to all MCP servers and AI-backend integrations to prevent similar breaches.

By understanding the technical details of these exploits, mapping them to adversarial frameworks like MITRE ATT&CK, and implementing a layered defense strategy, security teams and developers can help secure the promising future of AI-augmented development.

Ready to Secure Your AI Integration?

Don't let your project be the next case study. Start by auditing your dependencies today.

Next Steps:

1. Scan your projects for mcp-server-git.

2. Enforce patching policies for all AI tooling dependencies.

3. Begin threat modeling sessions focused on AI-agent access to critical tools.

For continuous learning on cutting-edge cybersecurity threats and defenses, consider following resources like The Hacker News, the SANS Institute Blog, and the MITRE ATT&CK® knowledge base.

© 2026 Cyber Pulse Academy. This content is provided for educational purposes only.

Always consult with security professionals for organization-specific guidance.