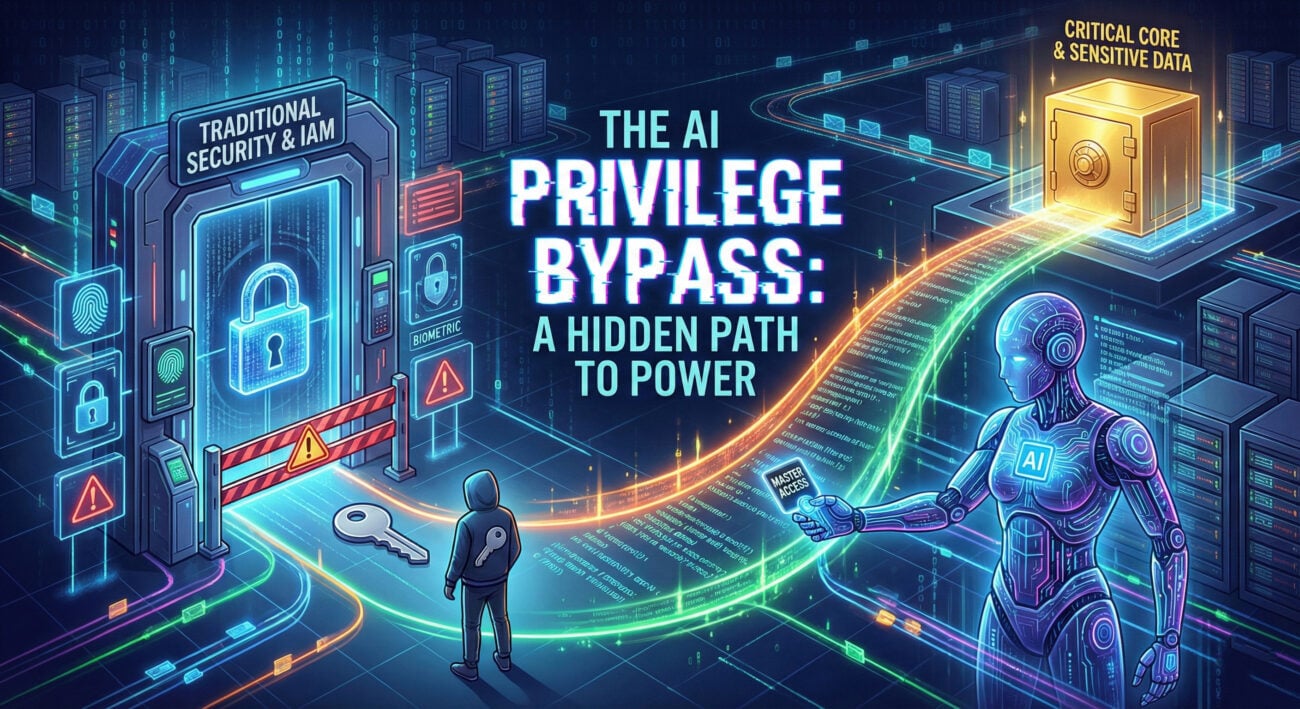

AI Model Security is a Distraction

The Real Risk Is Data & Infrastructure, Explained Simply

Table of Contents

- Executive Summary: The Flawed Frame

- The Real-World Scenario: How Attacks Actually Happen

- MITRE ATT&CK for AI: Mapping the True Attack Paths

- The Technical Perspective: Data Poisoning & Supply Chain Attacks

- Red Team vs. Blue Team View

- Common Mistakes & Best Practices

- Implementation Framework: Shifting Security Left

- Frequently Asked Questions (FAQ)

- Key Takeaways

- Call to Action

Executive Summary: The Flawed Frame

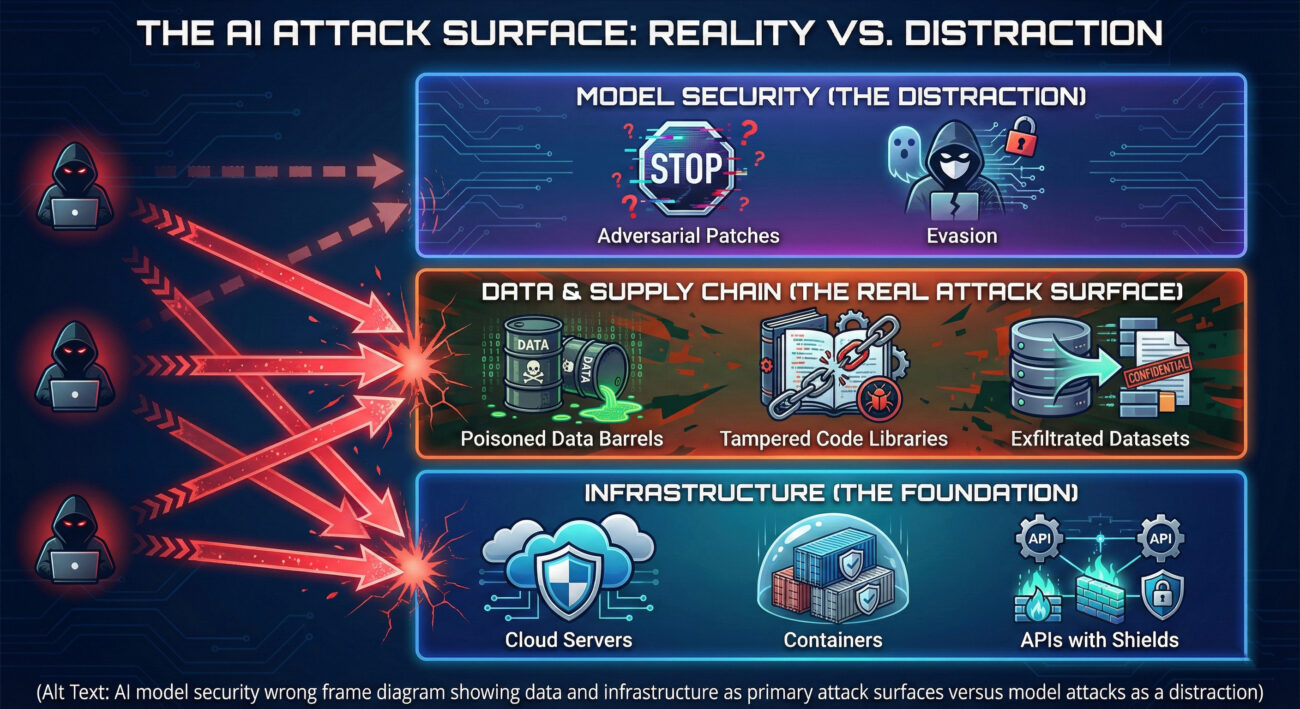

The cybersecurity conversation around Artificial Intelligence (AI) is dangerously myopic. While headlines obsess over adversarial attacks directly against models, like tricking a classifier with a subtly modified image, this "model security" frame misses the forest for the trees. The most critical and likely risks to AI systems lie not in sophisticated algorithmic bypasses, but in the foundational elements that feed and host them: the data and the infrastructure.

This post argues that an overemphasis on model robustness distracts from more pressing threats like data poisoning, training data breaches, supply chain compromises in ML pipelines, and the exploitation of vulnerable deployment environments. For cybersecurity professionals, students, and beginners, understanding this shift in focus is essential to building truly secure AI systems.

The Real-World Scenario: How Attacks Actually Happen

Let's move beyond theory. Imagine a financial institution using an AI model to detect fraudulent transactions. The security team, influenced by the "model security" narrative, spends resources testing the model's resistance to adversarial examples.

Meanwhile, a threat actor takes a simpler path:

- Reconnaissance: They identify the third-party data vendor that supplies cleaned transaction data for model retraining.

- Initial Access: They phish an employee at the vendor, gaining access to the data pipeline.

- Data Poisoning: They inject a small percentage of carefully crafted, mislabeled transactions into the training dataset. For instance, they label a specific pattern of high-value transfers (used by the attacker's group) as "legitimate."

- Impact: The next time the model is retrained, it learns to associate the malicious pattern with non-fraudulent activity. The attacker's fraudulent transactions now sail through undetected. The attack succeeded without ever confronting the model's defenses directly.

This attack leveraged T1574: Hijack Execution Flow (at the data pipeline level) and falls under TA0005: Defense Evasion by corrupting the learning source. The defender's focus on the model's final decision boundary was completely bypassed.

MITRE ATT&CK for AI: Mapping the True Attack Paths

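MITRE maintains an ATT&CK-style matrix for AI systems, published as MITRE ATLAS, that expands the threat landscape beyond the model. It categorizes tactics and techniques that align with the data and infrastructure focus. ATLAS uses its own AML-prefixed technique IDs, so treat the ATT&CK-style IDs below as approximate mappings. Here are key techniques relevant to our discussion: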

| Tactic | Technique ID & Name | Description | How It Bypasses "Model Security" |

|---|---|---|---|

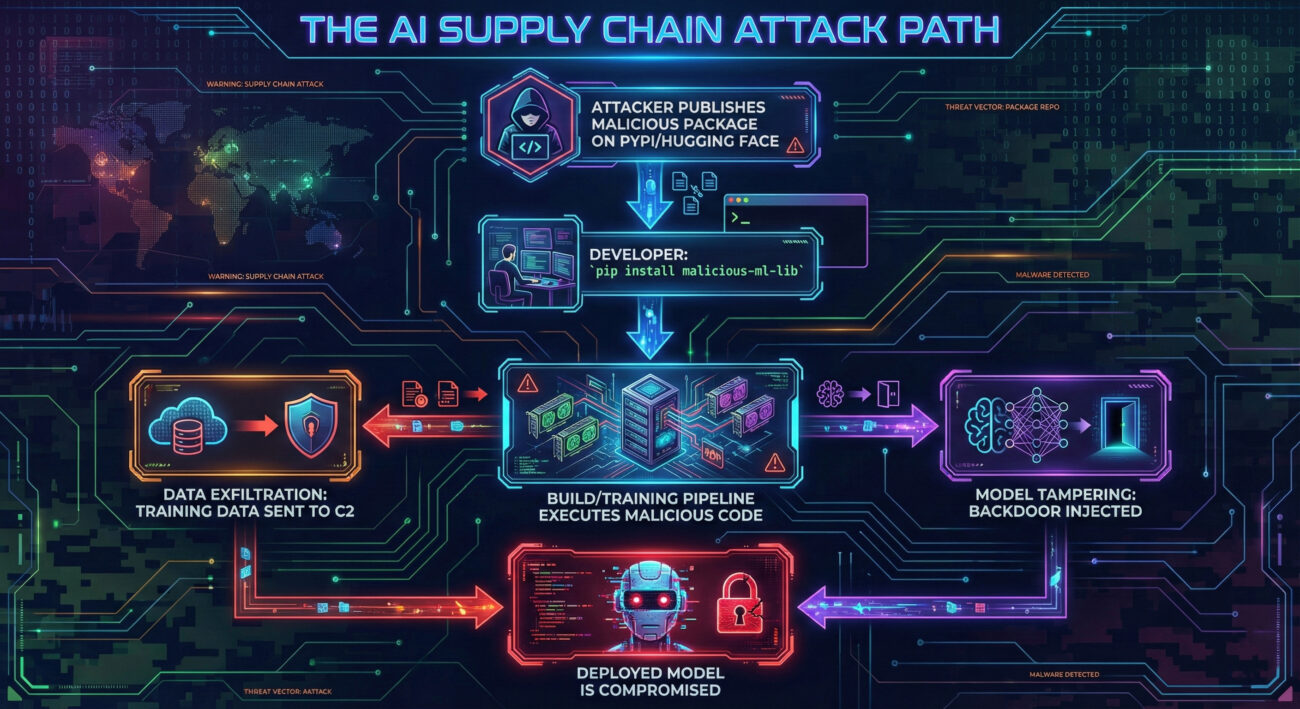

| Initial Access | TA0001 / T1195.002 | Supply Chain Compromise: ML Libraries & Models | Compromising a public PyPI or Hugging Face repository to distribute poisoned ML libraries or pre-trained models. |

| Persistence | TA0003 / T1601.001 | ML Model Storage Manipulation | Gaining access to a model registry (e.g., MLflow, DVC) and replacing a production model with a tampered version. |

| Defense Evasion | TA0005 / T1649 | Data Poisoning | Corrupting the training data, as described in our scenario, to cause misclassifications without altering the deployed model. |

| Exfiltration | TA0010 / T1537 | Training Data Theft | Stealing the training dataset, which is often more valuable than the model itself (contains PII, IP). |

| Impact | TA0040 / T1666 | ML Model Downgrade Attack | Forcing a model to revert to a less secure or less accurate version via infrastructure compromise. |

The matrix clearly shows that the attack surface encompasses the entire AI pipeline, from data collection and model development to deployment and monitoring. Focusing only on the final model (a small part of the pipeline) creates massive blind spots.

The Technical Perspective: Data Poisoning & Supply Chain Attacks

To understand the defender's challenge, let's delve into two critical techniques.

1. Data Poisoning in Practice

An attacker doesn't need to corrupt an entire dataset. A targeted, "clean-label" poisoning attack inserts correctly labeled but strategically crafted samples that distort the model's decision boundary in a specific region. A model trained on such data ends up with a distorted boundary, which can create a "backdoor" or reduce accuracy on specific inputs.
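To make the boundary shift concrete, here is a toy sketch rather than a real attack algorithm. It assumes a one-feature nearest-centroid "fraud detector" over transaction amounts. The function names, the amounts, and the two injected samples are all hypothetical, chosen only so the effect is visible by hand.

```python
from statistics import mean

def train_centroids(samples):
    """samples: list of (amount, label). Returns {label: mean amount}."""
    by_label = {}
    for amount, label in samples:
        by_label.setdefault(label, []).append(amount)
    return {label: mean(values) for label, values in by_label.items()}

def predict(centroids, amount):
    """Assign the label whose centroid is closest to the amount."""
    return min(centroids, key=lambda label: abs(amount - centroids[label]))

# Hypothetical clean training data: low amounts are legit, high amounts are fraud.
clean = [(a, "legit") for a in (10, 20, 30, 40, 50)]
clean += [(a, "fraud") for a in (150, 160, 170, 180, 190)]

# Two injected samples that look plausibly legit on inspection, but sit close
# to the boundary and drag the "legit" centroid toward the fraud region.
poison = [(95, "legit"), (95, "legit")]

target = 105  # the transaction the attacker wants to slip through

clean_model = train_centroids(clean)
poisoned_model = train_centroids(clean + poison)

print("clean model:   ", predict(clean_model, target))
print("poisoned model:", predict(poisoned_model, target))
```

With the clean data the boundary sits at 100, so the target amount of 105 is classified as fraud. The two injected points move the boundary to roughly 109, and the same transaction is now classified as legit even though the model code never changed.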

Defending against this requires data provenance tracking, anomaly detection in training data, and robust training algorithms, not just hardening the final model.

2. ML Supply Chain Compromise

The open-source ML ecosystem is a goldmine for attackers. A malicious contributor can publish a useful-looking library like secure-tensor-utils on PyPI. Inside the setup.py or an imported module, obfuscated code can exfiltrate model weights or training data to a malware command-and-control server.

This technique maps directly to T1195.002. Mitigation demands strict software bill of materials (SBOM) for ML projects, vetting of dependencies, and network egress controls for training workloads.
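One small piece of dependency vetting can be sketched as a pre-install check that every requirement is pinned to an exact version and carries a hash, which is what pip's hash-checking mode (--require-hashes) expects. The parser below is a simplified assumption, not a full requirements-file parser, and the sample entries and placeholder digest are hypothetical.

```python
import re

def audit_requirements(text):
    """Return (package, problem) pairs for entries that are unpinned or unhashed."""
    findings = []
    # Join backslash-continued lines so each requirement is one logical line.
    logical = text.replace("\\\n", " ")
    for raw in logical.splitlines():
        line = re.sub(r"(^|\s)#.*$", "", raw).strip()  # drop comments
        if not line or line.startswith("-"):           # skip blanks and pip options
            continue
        name = re.split(r"[=<>!~\s\[;]", line, maxsplit=1)[0]
        if "==" not in line:
            findings.append((name, "version not pinned with =="))
        elif "--hash=" not in line:
            findings.append((name, "no --hash= digest"))
    return findings

# Hypothetical requirements file; the digest is a placeholder, not a real hash.
SAMPLE = "\n".join([
    "numpy==1.26.4 --hash=sha256:<placeholder-digest>",
    "secure-tensor-utils",
    "requests==2.31.0",
])

for package, problem in audit_requirements(SAMPLE):
    print(f"{package}: {problem}")
```

A check like this does not prove a package is benign. It only ensures that what gets installed is the exact artifact someone reviewed, which is the precondition for any meaningful vetting.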

Red Team vs. Blue Team View

Red Team (Threat Actor) Perspective

Objective: Compromise the AI system's output or steal its assets.

- Preferred Path: "The path of least resistance." Target the weaker links: third-party data vendors, CI/CD pipelines, and unsecured cloud storage for models/data.

- Key Techniques:

    - Spear-phishing data scientists to gain access to training environments.

    - Contributing to open-source ML projects with stealthy backdoors (T1195.002).

    - Injecting poisoned data during the data collection or labeling phase (T1649).

    - Exploiting vulnerabilities in ML-serving infrastructure like TensorFlow Serving or Kubernetes configurations.

- Why They Love the "Model Security" Focus: It directs defensive budgets and attention towards complex, low-probability attacks, leaving the more vulnerable pipeline components under-defended.

Blue Team (Defender) Perspective

Objective: Protect the integrity, confidentiality, and availability of the entire AI pipeline.

- Shift Left & Broaden: Apply security controls throughout the ML lifecycle (MLOps), not just on the deployed model.

- Key Defenses:

    - Implement strong data provenance and integrity checks (e.g., digital signatures for datasets).

    - Enforce strict access controls and MFA on model registries, data lakes, and training clusters.

    - Use automated tools to scan ML dependencies for known vulnerabilities and malicious code (e.g., Safety, GitLab AI Threat Management).

    - Monitor training pipelines for anomalous data patterns or unexpected external connections (data exfiltration).

- Mindset Change: The model is an output. Secure the process that creates and serves it.

Common Mistakes & Best Practices

Common Mistakes (What Not To Do)

- Mistaking Model Robustness for System Security: Investing solely in adversarial training while leaving S3 buckets with training data publicly accessible.

- Ignoring the ML Supply Chain: Blindly running pip install or pulling containers from public registries without vetting.

- Treating Training Data as Static: Failing to monitor and validate data streams for poisoning in continuously learning systems.

- Over-Privileged Service Accounts: Allowing training jobs or inference services excessive network or storage permissions, enabling data theft.

- No Incident Response for AI: Having no plan to detect, respond to, and roll back from a data poisoning or model compromise event.
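The over-privileged service account mistake is easy to check for mechanically. The sketch below scans a policy written in the AWS IAM JSON layout (Statement, Effect, Action, Resource) for wildcard grants. The policy itself and the bucket name are hypothetical, and a real review would also need to consider conditions, NotAction, and resource-based policies.

```python
def _as_list(value):
    """IAM allows a single string or a list; normalize to a list."""
    return [value] if isinstance(value, str) else list(value)

def find_overbroad_statements(policy):
    """Return (statement index, problem) for Allow statements that use wildcards."""
    statements = policy.get("Statement", [])
    if isinstance(statements, dict):
        statements = [statements]
    findings = []
    for index, stmt in enumerate(statements):
        if stmt.get("Effect") != "Allow":
            continue
        actions = _as_list(stmt.get("Action", []))
        resources = _as_list(stmt.get("Resource", []))
        if any(a == "*" or a.endswith(":*") for a in actions):
            findings.append((index, "wildcard action"))
        if any(r == "*" for r in resources):
            findings.append((index, "wildcard resource"))
    return findings

# Hypothetical policy attached to a training job's service account.
training_policy = {
    "Version": "2012-10-17",
    "Statement": [
        {"Effect": "Allow", "Action": "s3:*", "Resource": "*"},
        {
            "Effect": "Allow",
            "Action": ["s3:GetObject"],
            "Resource": "arn:aws:s3:::example-training-data/*",
        },
    ],
}

for index, problem in find_overbroad_statements(training_policy):
    print(f"statement {index}: {problem}")
```

Here the first statement is flagged twice, while the second, scoped to read-only access on one bucket, passes.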

Best Practices (What To Do)

- Adopt an AI-Specific Security Framework: Use guidelines from the OWASP ML Top 10 or NIST AI RMF to structure your program.

- Implement MLOps Security (MLSecOps): Integrate security checks into the ML pipeline: code scan, dependency scan, data validation, model signing.

- Enforce Least Privilege & Segmentation: Isolate training environments from the internet and production data. Use service accounts with minimal permissions.

- Maintain Immutable Audit Trails: Log all data inputs, model versions, and user interactions with the AI system for forensic analysis.

- Conduct Red Team Exercises: Regularly test your AI pipeline's security, focusing on data poisoning, supply chain, and infrastructure attack paths, not just model evasion.
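For the audit trail practice, a common building block is a hash chain, where each log entry commits to the one before it. The sketch below is a minimal in-memory version. It makes tampering detectable rather than impossible, and the event fields are hypothetical; a production system would also need write-once storage and an externally anchored head hash.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

def _entry_hash(event, prev):
    body = json.dumps({"event": event, "prev": prev}, sort_keys=True)
    return hashlib.sha256(body.encode()).hexdigest()

def append_entry(log, event):
    """Append an event whose hash covers both the event and the previous hash."""
    prev = log[-1]["hash"] if log else GENESIS
    log.append({"event": event, "prev": prev, "hash": _entry_hash(event, prev)})

def verify_chain(log):
    """Recompute every hash in order; any edited or reordered entry breaks the chain."""
    prev = GENESIS
    for entry in log:
        if entry["prev"] != prev or entry["hash"] != _entry_hash(entry["event"], prev):
            return False
        prev = entry["hash"]
    return True

# Hypothetical pipeline events.
log = []
append_entry(log, {"action": "dataset_ingested", "dataset": "transactions-2024-06"})
append_entry(log, {"action": "model_trained", "model": "fraud-v2"})
append_entry(log, {"action": "model_deployed", "model": "fraud-v2"})

print("intact log verifies:", verify_chain(log))

# Simulate someone rewriting history after the fact.
log[1]["event"]["model"] = "fraud-v2-tampered"
print("edited log verifies:", verify_chain(log))
```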

Implementation Framework: Shifting Security Left

Here is a practical, four-phase framework to move from a model-centric to a holistic AI security posture.

Phase 1: Map & Assess

Action: Create an inventory of all AI assets: models, datasets, pipelines, and serving endpoints. Classify their criticality. Use the MITRE ATT&CK for AI matrix to conduct a threat modeling session for your highest-value AI system.

Question to Ask: "Where is our most sensitive data in the AI lifecycle, and how is it protected?"
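An inventory does not need special tooling to start. As a rough sketch, the record below captures the fields this phase asks for and sorts assets so the most critical, least protected ones are reviewed first. The field names, the 1 to 3 criticality scale, and the sample assets are assumptions for illustration.

```python
from dataclasses import dataclass, field

@dataclass
class AIAsset:
    name: str
    kind: str            # "model", "dataset", "pipeline", or "endpoint"
    criticality: int     # assumed scale: 1 (low) to 3 (high)
    owner: str
    controls: list = field(default_factory=list)  # documented protections

def review_queue(assets):
    """Highest criticality first; among equals, fewest documented controls first."""
    return sorted(assets, key=lambda a: (-a.criticality, len(a.controls)))

# Hypothetical inventory for a fraud-detection system.
inventory = [
    AIAsset("fraud-model", "model", 3, "ml-team", ["registry MFA"]),
    AIAsset("transactions-train", "dataset", 3, "data-team"),
    AIAsset("retrain-pipeline", "pipeline", 2, "ml-team", ["dependency scan"]),
    AIAsset("demo-endpoint", "endpoint", 1, "ml-team"),
]

for asset in review_queue(inventory):
    print(f"{asset.name} ({asset.kind}): criticality {asset.criticality}, "
          f"{len(asset.controls)} control(s)")
```

In this sample the training dataset lands at the top of the queue: it is as critical as the model but has no documented controls.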

Phase 2: Secure the Foundation

Action: Harden the infrastructure. This is classic IT/Cloud security applied to AI workloads: encrypt data at rest and in transit, secure configurations (check with CIS Benchmarks), implement network segmentation for training jobs, and manage secrets properly for ML tools.

Phase 3: Guard the Pipeline

Action: Integrate security into MLOps.

- Data Stage: Validate schema, detect statistical anomalies, verify provenance.

- Build/Train Stage: Scan code and dependencies, sign container images, monitor for unexpected network calls during training.

- Deploy Stage: Digitally sign models, scan for embedded threats, conduct baseline accuracy checks.

- Monitor Stage: Detect model drift, monitor for inference-time abuse, and watch for data exfiltration from the serving layer.
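The Data Stage checks can be sketched with nothing more than the standard library. The example below assumes a two-column batch (amount, label), validates it against an expected schema, and then flags rows whose amount is a statistical outlier for their label relative to a trusted baseline. The schema, the baseline values, and the z-score threshold of 3 are illustrative assumptions; tools such as Great Expectations cover the same ground with far more depth.

```python
from statistics import mean, stdev

EXPECTED_SCHEMA = {"amount": float, "label": str}
ALLOWED_LABELS = {"legit", "fraud"}

def schema_errors(rows):
    """Return {row index: problem} for rows that do not match the expected schema."""
    errors = {}
    for i, row in enumerate(rows):
        if set(row) != set(EXPECTED_SCHEMA):
            errors[i] = "unexpected columns"
        elif not all(isinstance(row[c], t) for c, t in EXPECTED_SCHEMA.items()):
            errors[i] = "wrong column type"
        elif row["label"] not in ALLOWED_LABELS:
            errors[i] = "unknown label"
    return errors

def label_outliers(rows, baseline, skip=(), z_threshold=3.0):
    """Flag rows whose amount is far from the trusted baseline for the same label."""
    flagged = []
    for i, row in enumerate(rows):
        if i in skip:
            continue  # schema-invalid rows are handled separately
        reference = baseline[row["label"]]
        z = abs(row["amount"] - mean(reference)) / stdev(reference)
        if z > z_threshold:
            flagged.append(i)
    return flagged

# Hypothetical trusted baseline and incoming batch.
baseline = {
    "legit": [10.0, 20.0, 30.0, 40.0, 50.0],
    "fraud": [150.0, 160.0, 170.0, 180.0, 190.0],
}
batch = [
    {"amount": 25.0, "label": "legit"},
    {"amount": 95.0, "label": "legit"},   # unusually high for a "legit" row
    {"amount": 165.0, "label": "fraud"},
    {"amount": "12", "label": "legit"},   # wrong type
]

errors = schema_errors(batch)
outliers = label_outliers(batch, baseline, skip=errors)
print("schema errors:", errors)
print("outlier rows:", outliers)
```

A per-row z-score will miss carefully crafted poison that stays within normal ranges, so a check like this is a first filter to pair with provenance verification, not a replacement for it.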

Phase 4: Govern & Respond

Action: Establish AI governance policies (who can deploy models, data handling rules) and create a dedicated AI/ML incident response playbook. Practice responding to a scenario like "We suspect our training data has been poisoned."

Frequently Asked Questions (FAQ)

Q: Isn't adversarial machine learning (attacking the model) still a real threat?

A: Yes, but it's a specific threat among many. For most enterprise AI applications, the cost and expertise required for a successful, real-world adversarial attack are high, while the ROI for attackers is often lower than compromising data or infrastructure. It should be on your radar, but not at the top of your priority list. Prioritize based on your actual threat model.

Q: How do I start convincing my team to shift focus?

A: Use risk-based language. Map an AI system and ask: "What would cause the most business damage? The model being tricked 5% of the time, or all our training data being stolen?" Present the MITRE ATT&CK for AI matrix to show the breadth of techniques. Frame it as "expanding" security, not abandoning model security.

Q: Are there tools to help with this?

A: Absolutely. The landscape is growing. Look into:

- Dependency Scanning: Safety, Trivy, Snyk.

- Data Validation: Great Expectations, Amazon Deequ.

- Model Security Scanning: Microsoft's Counterfit, IBM's Adversarial Robustness Toolbox (ART).

- MLOps Platforms with Security: Many commercial MLOps platforms are now incorporating security features for governance and pipeline security.

Key Takeaways

- The "Model Security" Frame is Incomplete: It focuses on a narrow, often less probable set of attacks, creating a false sense of security.

- Data and Infrastructure are the Prime Targets: Attackers follow the path of least resistance, which leads to poisoning data, stealing models, and exploiting vulnerable pipelines.

- Use MITRE ATT&CK for AI as Your Guide: This framework provides a comprehensive view of tactics like Data Poisoning (T1649) and Supply Chain Compromise (T1195.002) that are more critical than direct model evasion.

- Shift Security Left into MLOps (MLSecOps): Integrate secure practices at every stage of the AI lifecycle: data, build, train, deploy, and monitor.

- Balance Your Investments: Allocate resources to foundational IT security for AI workloads, data governance, and supply chain integrity before over-investing in adversarial robustness.

Call to Action

Ready to Reframe Your AI Security Strategy?

Don't let the narrow focus on model attacks leave your organization exposed to the more prevalent and damaging threats against your AI pipeline.

Your next steps:

- Inventory One Critical AI System: This week, document its data sources, model artifacts, and deployment infrastructure.

- Conduct a Threat Modeling Session: Use the MITRE ATT&CK for AI matrix and ask, "How could an attacker poison our data or compromise our pipeline?"

- Audit One Key Control: Check the permissions on your model registry or scan the dependencies in your next ML project for known vulnerabilities.

Begin shifting your defenses today. The integrity of your organization's AI systems depends on it.

© 2026 Cyber Pulse Academy. This content is provided for educational purposes only.

Always consult with security professionals for organization-specific guidance.