Chainlit AI Framework Vulnerabilities Expose Data to File Read and SSRF Attacks

The Silent Front-End Threat You Must Stop

The rapid adoption of AI frameworks like Chainlit is introducing familiar but dangerous security vulnerabilities into new, critical infrastructure. Recently discovered Chainlit vulnerabilities, tracked as CVE-2026-22218 and CVE-2026-22219, reveal how a popular tool for building conversational AI can be weaponized to steal sensitive files and cloud keys and to breach internal networks. This analysis breaks down the technical attack chain, maps it to the MITRE ATT&CK® framework, and provides a clear defensive roadmap for developers and security teams.

Table of Contents

- Executive Summary: The ChainLeak Vulnerabilities

- Technical Breakdown: How the Chainlit Vulnerabilities Work

- A Real-World Attack Scenario

- Mapping to MITRE ATT&CK: The Attacker's Playbook

- Red Team vs. Blue Team Perspective

- Step-by-Step: Understanding the Exploit Path

- Common Mistakes & Best Practices for AI Framework Security

- Frequently Asked Questions (FAQ)

- Key Takeaways & Call to Action

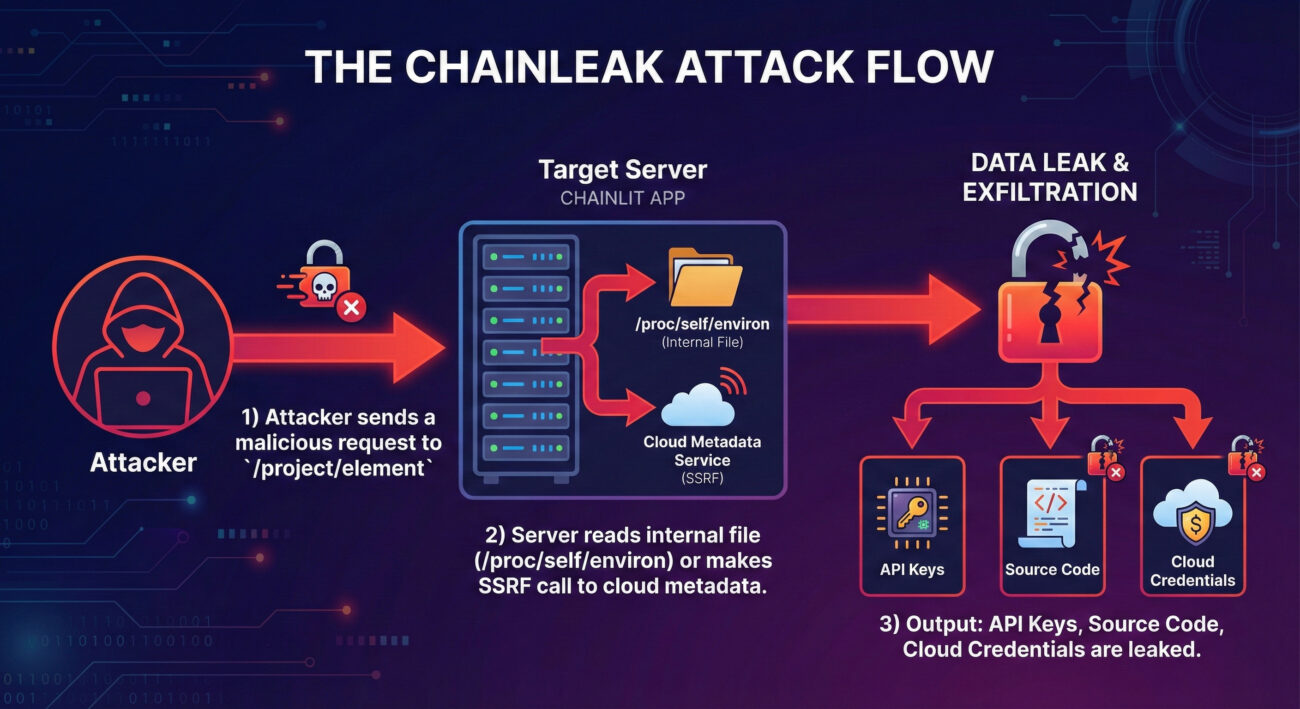

Executive Summary: The ChainLeak Vulnerabilities

In January 2026, security researchers at Zafran disclosed two high-severity flaws in the open-source Chainlit AI framework, collectively dubbed "ChainLeak." Chainlit, with over 7.3 million downloads, is used to create and deploy conversational chatbots. Together, these Chainlit vulnerabilities create a perfect storm for a data breach:

- CVE-2026-22218 (CVSS 7.1 - High): An arbitrary file read bug in the `/project/element` API endpoint. An authenticated attacker could read any file accessible by the server process.

- CVE-2026-22219 (CVSS 8.3 - High): A Server-Side Request Forgery (SSRF) vulnerability in the same endpoint when using the SQLAlchemy backend. It allows making arbitrary HTTP requests from the server, potentially accessing internal cloud metadata services.

When combined, these flaws allow an attacker to move from a limited application attack to full-scale compromise of the hosting environment, leading to lateral movement and massive data exfiltration. The vulnerabilities were patched in Chainlit version 2.9.4, released in December 2025.

Technical Breakdown: How the Chainlit Vulnerabilities Work

To defend effectively, you must understand the attack mechanics. Let's dissect each CVE.

CVE-2026-22218: The Arbitrary File Read

The flaw existed in the file upload logic for chat "elements" (such as images and PDFs). The endpoint failed to properly validate user-controlled input that specified file paths. An attacker could manipulate a request to point to a sensitive system file instead of an uploaded file.

Technical Impact: By submitting a path like ../../../../proc/self/environ, an attacker could read the server's environment variables. This file is a goldmine, often containing:

- Database connection strings and credentials (`DATABASE_URL`)

- Third-party API keys (OpenAI, AWS, etc.)

- Internal configuration secrets and cryptographic keys

- Application source code paths
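The class of fix for this kind of bug can be sketched in a few lines. The following is a minimal illustration, not Chainlit's actual patch: the function name `resolve_upload_path`, the exception `UnsafePathError`, and the idea of a single upload root directory are all assumptions made for the example.

```python
from pathlib import Path


class UnsafePathError(ValueError):
    """Raised when a requested path escapes the allowed upload directory."""


def resolve_upload_path(upload_root: str, user_supplied: str) -> Path:
    """Resolve user_supplied under upload_root, refusing anything outside it."""
    root = Path(upload_root).resolve()
    # resolve() collapses ".." segments and follows symlinks before the check.
    # An absolute user_supplied path replaces root entirely and is rejected below.
    candidate = (root / user_supplied).resolve()
    if not candidate.is_relative_to(root):  # requires Python 3.9+
        raise UnsafePathError(f"path escapes upload root: {user_supplied!r}")
    return candidate
```

Checking the resolved path against the root, rather than searching the raw string for `..`, is the design choice that matters here: it also catches absolute paths and symlink tricks that a string filter would miss.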

CVE-2026-22219: The SSRF Gateway

This vulnerability was triggered when Chainlit was configured to use SQLAlchemy with a database like PostgreSQL. The flaw allowed an attacker to inject a URL into a parameter that the server would then fetch. Crucially, this request originated from the Chainlit server itself, bypassing network firewalls that might block external traffic.

Prime Target: Cloud Metadata Services. In cloud environments like AWS, a special internal endpoint (169.254.169.254) provides credentials for the instance's assigned role. By exploiting this SSRF, an attacker could steal these cloud IAM credentials, granting them permissions to other services (S3 buckets, databases) within the cloud account, enabling catastrophic lateral movement.
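A common defensive pattern against this class of SSRF is to resolve the destination before fetching it and refuse internal addresses. The sketch below uses only the Python standard library; the function name `is_url_safe_to_fetch` is hypothetical, and this is an illustration of the technique rather than the check Chainlit ships.

```python
import ipaddress
import socket
from urllib.parse import urlsplit


def is_url_safe_to_fetch(url: str) -> bool:
    """Return False for URLs that point at loopback, private, or link-local hosts."""
    parts = urlsplit(url)
    if parts.scheme not in ("http", "https") or not parts.hostname:
        return False
    try:
        # Resolve the hostname; IP literals resolve to themselves without DNS.
        infos = socket.getaddrinfo(parts.hostname, None)
    except socket.gaierror:
        return False
    for info in infos:
        # Drop any IPv6 scope suffix such as "%eth0" before parsing.
        addr = ipaddress.ip_address(info[4][0].split("%")[0])
        if (addr.is_private or addr.is_loopback or addr.is_link_local
                or addr.is_reserved or addr.is_multicast or addr.is_unspecified):
            return False
    return True
```

A check like this has a known gap: if the hostname is resolved again at fetch time, a DNS answer can change between the check and the request. Pinning the request to the address that was validated, plus egress filtering at the network layer, closes that gap more reliably than validation alone.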

A Real-World Attack Scenario

Imagine "TechCorp," which uses a Chainlit-powered internal AI assistant to help employees query company documentation. The app runs on an AWS EC2 instance.

- Initial Foothold: An attacker, perhaps a malicious insider or someone who phished low-level credentials, gains access to a user account on the AI chatbot.

- File Read Exploit: They use CVE-2026-22218 to read `/proc/self/environ`. They extract the `AWS_ACCESS_KEY_ID` and a path to the application source code.

- Source Code Analysis: They then read the application's source code, discovering database credentials and the fact it uses SQLAlchemy (enabling the SSRF flaw).

- SSRF Escalation: They weaponize CVE-2026-22219, forcing the server to call the AWS Instance Metadata Service (IMDSv1). The server retrieves temporary cloud role credentials with far greater privileges than the original user.

- Lateral Movement & Breach: Using these stolen cloud keys, the attacker accesses TechCorp's internal S3 buckets containing customer data and production databases, culminating in a full-scale data breach.

Mapping to MITRE ATT&CK: The Attacker's Playbook

Understanding these Chainlit vulnerabilities within the MITRE ATT&CK framework helps defenders recognize the tactics and plan detection strategies.

| MITRE ATT&CK Tactic | Technique (ID) | How ChainLeak is Utilized |
|---|---|---|
| Initial Access | Valid Accounts (T1078) | The attack requires an authenticated session, exploiting legitimate user credentials. |
| Discovery | File and Directory Discovery (T1083), Cloud Infrastructure Discovery (T1580) | Reading /proc/self/environ and source code discovers secrets and configs. SSRF queries cloud metadata to discover IAM role info. |
| Credential Access | Unsecured Credentials (T1552), Cloud Instance Metadata API (T1552.005) | The core impact: stealing API keys from environment variables and cloud IAM roles via the metadata API attack. |
| Lateral Movement | Use Alternate Authentication Material (T1550) | Stolen cloud credentials are used to move from the compromised app server to other services (S3, RDS) within the cloud environment. |
| Exfiltration | Exfiltration Over Web Service (T1567) | Sensitive data from internal files and cloud services is transmitted back to the attacker. |

Red Team vs. Blue Team Perspective

Red Team (Attack) View

Objective: Leverage the app to gain cloud access and exfiltrate data.

- Reconnaissance: Identify the app as Chainlit-based. Enumerate endpoints.

- Initial Exploit: Use a low-privilege account to trigger the file read (CVE-2026-22218). Target `/proc/self/environ` first for quick wins.

- Privilege Escalation: If SQLAlchemy is in use, pivot to the SSRF (CVE-2026-22219). Target the cloud metadata endpoint to steal IAM role credentials.

- Persistence & Exfiltration: Use stolen cloud keys via AWS CLI/SDK to explore and copy data from other services, establishing a backdoor.

Blue Team (Defense) View

Objective: Detect and prevent exploitation, limit damage.

- Prevention: Immediately update to Chainlit >=2.9.4. Enforce IMDSv2 on all cloud instances. Implement strict input validation and allow-listing for file operations.

- Detection: Monitor logs for abnormal file paths accessed via the `/project/element` endpoint. Set alerts for outbound requests from the app server to the internal metadata IP (169.254.169.254).

- Containment: Run the Chainlit app with a low-privilege, dedicated system user. Apply network segmentation and egress filtering to block the app server from accessing metadata and other internal services.

- Incident Response: Have a playbook ready for credential rotation (especially cloud IAM keys and database passwords) if exploitation is suspected.
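The detection items above can be prototyped as a simple log filter before being turned into proper SIEM rules. This is a rough sketch under stated assumptions: the log lines are plain text that include the request path or the egress destination, and the regular expression and the function name `flag_suspicious` are illustrative choices, not a complete signature set.

```python
import re
from urllib.parse import unquote

# Illustrative patterns only; tune these for your own log format.
TRAVERSAL = re.compile(r"(\.\./|\.\.\\|/proc/self/|/etc/passwd)")
METADATA_IP = "169.254.169.254"


def flag_suspicious(log_lines):
    """Yield (line_number, reason, line) for log lines worth a closer look."""
    for number, raw in enumerate(log_lines, start=1):
        line = unquote(raw)  # decode %2e%2e%2f-style encodings first
        if "/project/element" in line and TRAVERSAL.search(line):
            yield number, "path traversal pattern on /project/element", raw
        elif METADATA_IP in line:
            yield number, "reference to cloud metadata IP", raw
```

Feeding it an open file works directly, for example `for hit in flag_suspicious(open("access.log")): print(hit)`, where `access.log` stands in for whatever log source you actually have.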

Step-by-Step: Understanding the Exploit Path

Here is a simplified technical walkthrough of how an attacker might chain these Chainlit vulnerabilities together.

Step 1: Gaining a Foothold & Discovery

The attacker already has a valid session cookie. They probe the application and discover the /project/element endpoint used for uploading files. They intercept a legitimate request and analyze its structure.

Step 2: Exploiting the File Read (CVE-2026-22218)

They modify the POST request to the vulnerable endpoint, changing a file path parameter to traverse to a sensitive location. For example:

    POST /project/element HTTP/1.1
    Host: vulnerable-ai-app.com
    ... (session cookies) ...

    {
      "file_path": "../../../proc/self/environ",
      "element_id": "attacker_controlled_id"
    }

The server, lacking validation, reads the environment file and includes its contents in the response, leaking secrets.
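To gauge what such a leak would expose in your own deployment, you can inspect the same file defensively. The sketch below parses the NUL-separated format of `/proc/<pid>/environ` and lists variable names that look sensitive; the keyword list and the function name `secret_like_names` are assumptions for illustration and will miss secrets with unusual names.

```python
import re

# Heuristic keywords; extend for your own naming conventions.
SECRET_HINT = re.compile(r"(KEY|SECRET|TOKEN|PASSWORD|PASSWD|DATABASE_URL|DSN)", re.I)


def secret_like_names(environ_bytes: bytes) -> list[str]:
    """Return names of variables in a /proc/<pid>/environ dump that look sensitive."""
    names = []
    # Entries are NAME=value pairs separated by NUL bytes.
    for entry in environ_bytes.split(b"\0"):
        if b"=" not in entry:
            continue
        name = entry.split(b"=", 1)[0].decode("utf-8", "replace")
        if SECRET_HINT.search(name):
            names.append(name)
    return sorted(names)
```

On a Linux host, passing it `Path("/proc/self/environ").read_bytes()` from inside the application process shows which names an attacker with this file read would see. Only names are returned, so the output is safe to paste into a ticket.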

Step 3: Pivoting to SSRF (CVE-2026-22219)

From the leaked data, the attacker confirms the use of SQLAlchemy. They craft a new request that injects a URL pointing to the cloud metadata service.

    {
      "data_source": "http://169.254.169.254/latest/meta-data/iam/security-credentials/",
      "action": "update_element"
    }

The Chainlit server makes this request and returns the IAM role name, which the attacker then queries further to get temporary access keys.
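The single GET shown above works against IMDSv1. IMDSv2 instead expects a two-step exchange: a PUT to obtain a session token, then a GET that carries that token in a header. The sketch below only builds the two requests with the standard library and does not send them; the helper names are hypothetical. Whether a given SSRF primitive can issue a PUT or set custom headers depends on the bug, which is why IMDSv2 is usually described as raising the bar rather than as a guarantee.

```python
import urllib.request

IMDS = "http://169.254.169.254"


def build_token_request(ttl_seconds: int = 21600) -> urllib.request.Request:
    """IMDSv2 step 1: a PUT that asks for a session token."""
    return urllib.request.Request(
        f"{IMDS}/latest/api/token",
        method="PUT",
        headers={"X-aws-ec2-metadata-token-ttl-seconds": str(ttl_seconds)},
    )


def build_metadata_request(token: str, path: str) -> urllib.request.Request:
    """IMDSv2 step 2: a GET that must carry the session token header."""
    return urllib.request.Request(
        f"{IMDS}/latest/meta-data/{path}",
        headers={"X-aws-ec2-metadata-token": token},
    )
```

On an instance that requires tokens, a plain GET without the `X-aws-ec2-metadata-token` header is rejected, so an attacker limited to URL-only injection cannot complete the exchange.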

Common Mistakes & Best Practices for AI Framework Security

The Chainlit vulnerabilities stem from classic security failures. Here’s what to avoid and what to implement.

Common Mistakes (The "Don'ts")

- Trusting User-Controlled Input: Directly using user-provided data for file paths or URLs without strict validation.

- Over-Permissive Service Accounts: Running the application with high system/cloud privileges, amplifying the impact of any breach.

- Using Outdated Metadata Services: Relying on AWS IMDSv1, which is vulnerable to simple SSRF, instead of enforcing IMDSv2.

- Secret Management in Environment Variables: Storing high-value secrets in environment variables, which are easily leaked via file read bugs.

- Lack of Network Segmentation: Allowing application servers unrestricted network access to internal metadata and database services.

Best Practices (The "Dos")

- Implement Strict Input Validation: Use allow-lists for expected file names and sanitize all user inputs. Reject paths containing `..`, `/proc`, or URLs with internal IP ranges.

- Adopt a Principle of Least Privilege: Run apps with a dedicated, low-privilege user. In the cloud, assign minimal IAM roles with only necessary permissions.

- Enforce IMDSv2 and Block Private IPs: Update cloud instances to use IMDSv2 (which requires a token). Configure network rules to block egress from apps to metadata IPs.

- Use a Dedicated Secrets Manager: Store API keys and database credentials in a secure service like AWS Secrets Manager or HashiCorp Vault, not in environment variables.

- Apply Regular Updates & Security Scans: Update dependencies like Chainlit promptly. Use SAST/DAST tools to scan for vulnerabilities in custom code and frameworks.
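The IMDSv2 item in the list above is easy to audit. The sketch below takes a dictionary shaped like the EC2 `describe_instances` response and returns the instances that still accept IMDSv1-style requests. The function name is hypothetical, and the example assumes you obtain the response yourself, for instance with boto3's `describe_instances` call.

```python
def instances_allowing_imdsv1(describe_instances_response: dict) -> list[str]:
    """Return IDs of instances whose metadata options do not require a token."""
    flagged = []
    for reservation in describe_instances_response.get("Reservations", []):
        for instance in reservation.get("Instances", []):
            options = instance.get("MetadataOptions", {})
            # A disabled metadata endpoint cannot be reached at all.
            endpoint_on = options.get("HttpEndpoint", "enabled") == "enabled"
            if endpoint_on and options.get("HttpTokens") != "required":
                flagged.append(instance["InstanceId"])
    return flagged
```

With boto3 installed and credentials configured, something like `instances_allowing_imdsv1(boto3.client("ec2").describe_instances())` would cover one region; larger accounts need pagination and a loop over regions, which this sketch leaves out.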

Frequently Asked Questions (FAQ)

Q1: I'm using Chainlit version 2.8. Am I definitely vulnerable?

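Yes. Any version prior to 2.9.4 is affected by these CVEs. Note that the SSRF (CVE-2026-22219) is exploitable only when the SQLAlchemy backend is in use, while the file read (CVE-2026-22218) carries no such condition. Either way, you should plan an immediate upgrade.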
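A quick way to answer this question across many environments is to compare the installed package version against the patched release. The sketch below uses only the standard library; the helper names are hypothetical, and the simple parser ignores pre-release suffixes, so treat its answer as a first pass rather than a definitive verdict.

```python
from importlib import metadata

PATCHED = (2, 9, 4)  # first fixed release according to the advisory summary above


def parse_version(text: str) -> tuple:
    """Turn '2.9.4' into (2, 9, 4); suffixes such as 'rc1' are ignored."""
    parts = []
    for piece in text.split(".")[:3]:
        digits = ""
        for ch in piece:
            if not ch.isdigit():
                break
            digits += ch
        parts.append(int(digits or 0))
    while len(parts) < 3:
        parts.append(0)
    return tuple(parts)


def is_patched(version_text: str) -> bool:
    """True when the version is at or above the patched release."""
    return parse_version(version_text) >= PATCHED


def installed_chainlit_is_patched():
    """Return True/False for the installed package, or None if not installed."""
    try:
        return is_patched(metadata.version("chainlit"))
    except metadata.PackageNotFoundError:
        return None
```

Comparing integer tuples instead of strings avoids the classic mistake of treating "2.10.0" as older than "2.9.4".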

Q2: Can these vulnerabilities be exploited remotely without any user account?

No. Both flaws require an authenticated session. This highlights the critical importance of strong authentication controls and monitoring user accounts for compromise.

Q3: Besides updating, what's the single most important mitigation?

For cloud deployments, enforcing IMDSv2 is a critical instance-level mitigation. Because IMDSv2 requires a session token obtained through a separate request, it can break the SSRF exploit chain even if the application flaw is unpatched. AWS provides tools to enforce this at the account level.

Q4: Are other AI frameworks vulnerable to similar issues?

Absolutely. The disclosure coincided with a similar SSRF flaw in Microsoft's MarkItDown MCP server. The pattern is clear: as AI frameworks integrate diverse data sources (files, URLs, APIs), they create new surfaces for classic vulnerabilities like SSRF and Path Traversal. A proactive security review of any AI framework is essential.

Q5: Where can I learn more about secure development for AI applications?

Start with the OWASP Top 10 for LLM Applications. For cloud security, the AWS Security Best Practices and Google Cloud Security Foundations Guide are excellent resources. The MITRE ATT&CK Navigator is also invaluable for understanding adversary behavior.

Key Takeaways & Call to Action

The Chainlit vulnerabilities serve as a powerful case study: AI innovation does not negate classical security risks. Frameworks that handle files and network requests are prime targets for well-known attack techniques.

- Update Immediately: If you use Chainlit, confirm you are on version 2.9.4 or higher.

- Harden Your Cloud: Enforce IMDSv2 and apply the principle of least privilege to all service roles.

- Validate Everything: Treat all user input in your AI applications as untrusted and potentially malicious.

- Shift Security Left: Integrate security testing (SAST, SCA) early in the development lifecycle of AI projects.

Ready to Secure Your AI Applications?

Don't let your innovative AI projects become the weakest link in your security chain. Begin with these actionable steps today.

1. Audit: Inventory all your applications using AI frameworks like Chainlit.

2. Patch: Prioritize updating to the latest secure versions.

3. Harden: Implement the network and cloud controls discussed above.

For ongoing insights into cybersecurity threats and defenses, explore more resources on our site or follow trusted sources like the CISA Secure Our World campaign.

© Cyber Pulse Academy. This content is provided for educational purposes only.

Always consult with security professionals for organization-specific guidance.
