Critical ServiceNow AI Vulnerability

Patch Immediately to Prevent Remote Code Execution: Explained Simply

In January 2026, ServiceNow disclosed a critical vulnerability in its AI Platform. If exploited, the flaw could allow attackers to execute arbitrary code remotely on affected systems, potentially compromising enterprise data and operations. For cybersecurity professionals and beginners alike, understanding this ServiceNow AI Platform vulnerability is crucial for protecting organizational assets in an increasingly AI-integrated world.

Table of Contents

- Executive Summary: The Gravity of the Situation

- Technical Deep Dive: How the Vulnerability Works

- Real-World Attack Scenario

- MITRE ATT&CK Technique Mapping

- Red Team vs Blue Team Perspectives

- Step-by-Step Patching and Mitigation Guide

- Common Mistakes & AI Security Best Practices

- Implementation Framework for AI Security

- Frequently Asked Questions

- Key Takeaways

- Call to Action

Executive Summary: The Gravity of the Situation

The recently patched ServiceNow AI Platform vulnerability represents a significant threat to organizations using ServiceNow's AI capabilities for IT service management, customer service, and operational workflows. Rated as critical with a CVSS score likely exceeding 9.0, this vulnerability affects the AI Search and Conversation components of the ServiceNow Platform, specifically within the Now Intelligence suite.

What makes this vulnerability particularly concerning is its potential for remote code execution (RCE), which would allow an authenticated attacker to execute arbitrary commands on the underlying infrastructure. Given ServiceNow's central role in enterprise operations, a successful exploit could lead to data breaches, service disruption, and lateral movement through corporate networks.

ServiceNow has released patches for all affected versions and strongly recommends immediate updating. For cybersecurity beginners, this incident highlights the critical importance of patch management in AI-integrated systems and understanding how attack surfaces expand with new technologies.

Technical Deep Dive: How the ServiceNow AI Vulnerability Works

To understand this ServiceNow AI vulnerability, we need to examine the technical mechanics behind the exploit. The vulnerability resides in how the AI Platform processes certain types of inputs within conversational AI and search functionalities.

The Root Cause: Input Validation Failure

The core issue is an input validation failure in AI-generated query processing. When the ServiceNow AI components handle specially crafted requests, they fail to properly sanitize user-supplied data that gets passed to backend systems. This creates an injection vector similar to traditional SQL injection but within the AI processing pipeline.

Technically speaking, the vulnerability allows an authenticated user (with appropriate application permissions) to inject malicious payloads through:

- AI-powered search queries

- Conversational AI interaction inputs

- Custom workflow inputs that leverage AI capabilities

- Integration points with external AI services

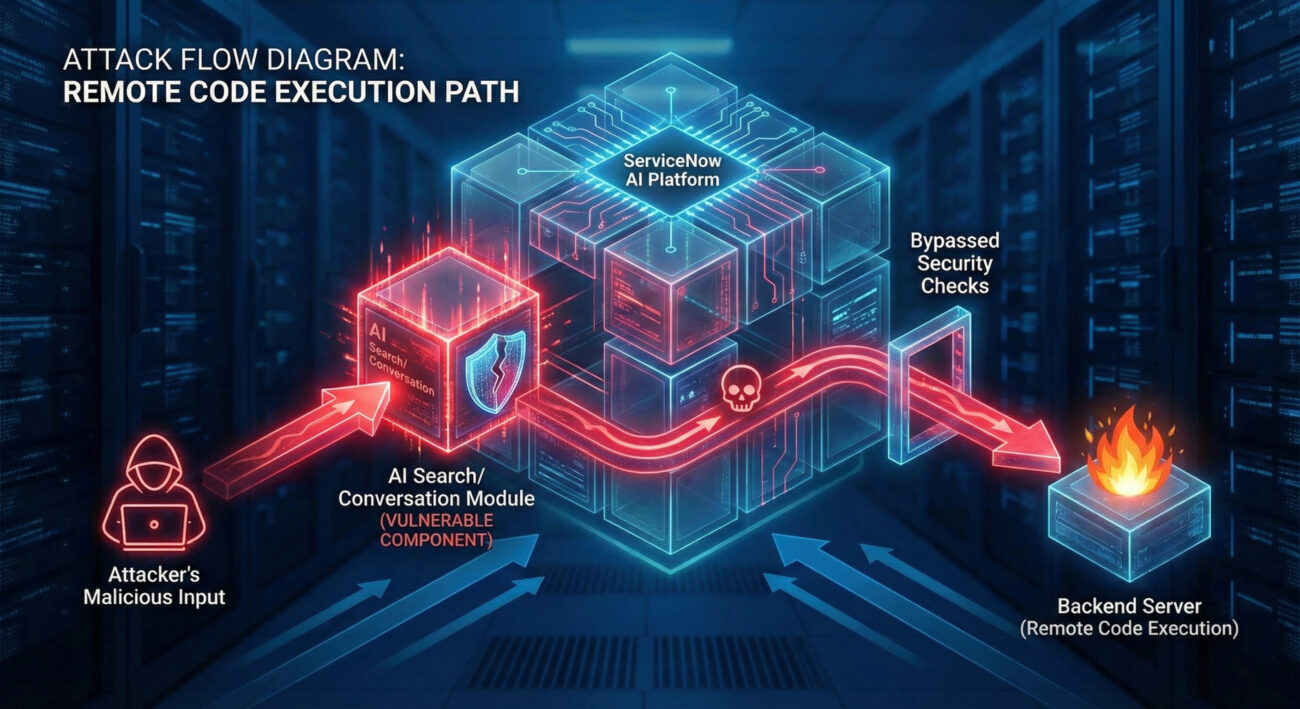

The Exploit Chain

The exploit follows this technical chain:

Step 1: Malicious Input Crafting

An attacker crafts a specially formatted input that appears legitimate to the AI component but contains hidden command sequences or escape characters designed to break out of the intended processing context.

Step 2: Insufficient Sanitization

The ServiceNow AI processing engine fails to properly sanitize this input, allowing the malicious payload to pass through to backend processing functions without adequate validation.

Step 3: Context Escape and Execution

The payload escapes its intended context and gets interpreted as executable code by underlying system components, leading to arbitrary command execution on the ServiceNow instance or connected systems.

A simplified illustration of such an input (not a working exploit):

User Input: "Search for user data"; {malicious_code: "system('cat /etc/passwd')"}

// The AI processor might incorrectly parse this as:

1. Legitimate search query: "Search for user data"

2. Executable code: system('cat /etc/passwd')
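Because the article compares this flaw to SQL injection, the same failure mode can be shown with that classic, well-understood case. The sketch below is not ServiceNow code and does not reproduce the actual vulnerability. The `articles` table, its columns, and both function names are invented for illustration. It uses Python's built-in `sqlite3` module to contrast splicing user text into a statement with passing it as a bound parameter.

```python
import sqlite3


def make_demo_db() -> sqlite3.Connection:
    """Build a throwaway in-memory database (hypothetical schema)."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE articles (title TEXT, restricted INTEGER)")
    conn.executemany(
        "INSERT INTO articles VALUES (?, ?)",
        [("Reset your password", 0), ("VPN setup", 0), ("Admin credentials", 1)],
    )
    return conn


def search_unsafe(conn: sqlite3.Connection, term: str) -> list:
    # VULNERABLE: user text becomes part of the statement itself,
    # so a quote character can end the string and add new logic.
    sql = (
        "SELECT title FROM articles "
        f"WHERE restricted = 0 AND title LIKE '%{term}%'"
    )
    return [row[0] for row in conn.execute(sql)]


def search_safe(conn: sqlite3.Connection, term: str) -> list:
    # SAFER: the statement is fixed; user text is bound as data only.
    sql = "SELECT title FROM articles WHERE restricted = 0 AND title LIKE ?"
    return [row[0] for row in conn.execute(sql, (f"%{term}%",))]
```

With the input `x' OR restricted = 1 --`, the spliced version returns the restricted row, while the parameterized version treats the whole string as a search term and finds nothing. The general lesson carries over: user-supplied text should reach backend components as data, never as part of the instructions.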

Real-World Attack Scenario: What Could Happen?

Let's imagine a realistic scenario where this ServiceNow AI vulnerability gets exploited in a corporate environment:

Acme Corporation uses ServiceNow for IT service management, with AI-powered chatbots handling employee IT support requests. An attacker who has obtained employee credentials (through phishing or other means) accesses the ServiceNow portal.

Instead of asking normal questions like "How do I reset my password?", the attacker crafts a malicious query to the AI chatbot: "Generate a report for all system users" combined with hidden escape sequences that trigger command execution.

The vulnerable AI component processes this input, and the malicious payload executes, allowing the attacker to:

- Extract sensitive employee data from the database

- Create backdoor administrator accounts

- Access connected systems through ServiceNow integrations

- Deploy malware or ransomware across the enterprise

MITRE ATT&CK Technique Mapping

Understanding this ServiceNow AI vulnerability through the MITRE ATT&CK framework helps security teams identify detection and prevention opportunities:

| MITRE ATT&CK Tactic | Technique ID | Technique Name | Application to This Vulnerability |
|---|---|---|---|
| Initial Access | T1078 | Valid Accounts | Attackers need authenticated access to ServiceNow |
| Execution | T1059 | Command and Scripting Interpreter | Vulnerability allows arbitrary command execution |
| Persistence | T1136 | Create Account | Could create backdoor admin accounts |
| Privilege Escalation | T1068 | Exploitation for Privilege Escalation | Could elevate from user to system-level privileges |
| Lateral Movement | T1021 | Remote Services | Could move to connected systems via ServiceNow |
| Exfiltration | T1041 | Exfiltration Over C2 Channel | Data theft through executed commands |

For blue teams, monitoring for these techniques, especially unusual command execution from ServiceNow components, can help detect exploitation attempts even before patches are applied.
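One way to make the mapping above actionable is to tag incoming telemetry with the technique IDs it might indicate. The following is a minimal sketch under stated assumptions: the `Event` fields, the `source` and `action` values, and the rule conditions are all hypothetical, and real log schemas depend on your SIEM and on what your ServiceNow deployment actually emits.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    """A simplified, hypothetical log event."""
    source: str       # system that produced the event
    action: str       # what happened
    detail: str = ""  # free-text context


# Each rule maps an ATT&CK technique ID to a predicate over an event.
# The conditions are illustrative placeholders, not vendor guidance.
RULES = {
    "T1059": lambda e: e.source == "servicenow" and e.action == "process_start",
    "T1136": lambda e: e.action == "account_created" and "admin" in e.detail.lower(),
    "T1041": lambda e: e.source == "servicenow" and e.action == "outbound_transfer",
}


def tag_event(event: Event) -> list:
    """Return the sorted technique IDs whose rule matches the event."""
    return sorted(tid for tid, rule in RULES.items() if rule(event))


if __name__ == "__main__":
    print(tag_event(Event("servicenow", "process_start", "/bin/sh -c id")))
```

Keeping rules as data makes it easy to add or tune a technique without touching the matching logic.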

Red Team vs Blue Team Perspectives

Red Team (Attack) Perspective

From an attacker's viewpoint, this ServiceNow AI vulnerability presents a golden opportunity:

- High-value target: ServiceNow often contains sensitive operational data

- Centralized access: Compromising ServiceNow can provide access to connected systems

- Stealth advantage: Malicious activity might blend with legitimate AI queries

- Persistence potential: Can establish backdoors that survive normal maintenance

A sophisticated attacker would:

- Conduct reconnaissance to identify ServiceNow instances

- Obtain credentials through phishing or credential stuffing

- Craft AI queries that appear normal but contain payloads

- Use the initial access for lateral movement and data exfiltration

Blue Team (Defense) Perspective

Defenders must prioritize patch management and detection strategies:

- Immediate patching: Apply ServiceNow patches as emergency changes

- Input validation: Implement additional input sanitization layers

- Monitoring: Watch for unusual command execution from ServiceNow

- Least privilege: Restrict ServiceNow integration account permissions

Effective defense includes:

- Maintaining an updated asset inventory of all ServiceNow instances

- Implementing strong authentication and MFA

- Creating detection rules for suspicious AI query patterns

- Conducting regular vulnerability assessments of AI components
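The "additional input sanitization layer" listed above could take the form of an allow-list check placed in front of any AI-facing input. This is a sketch, assuming a hypothetical proxy or middleware you control. The length limit and permitted character set are arbitrary choices for illustration and would need tuning for real user traffic.

```python
import re
import unicodedata

MAX_QUERY_LENGTH = 256  # arbitrary illustrative limit

# Allow word characters, whitespace, and a small set of punctuation.
# Everything else (quotes, braces, semicolons, slashes) is rejected.
ALLOWED_QUERY = re.compile(r"[\w\s.,?!'@-]+")


def validate_query(raw: str) -> str:
    """Return a normalized query, or raise ValueError if it looks unsafe."""
    # Normalize first so look-alike Unicode forms are checked consistently.
    text = unicodedata.normalize("NFKC", raw).strip()
    if not text:
        raise ValueError("empty query")
    if len(text) > MAX_QUERY_LENGTH:
        raise ValueError("query too long")
    if not ALLOWED_QUERY.fullmatch(text):
        raise ValueError("query contains disallowed characters")
    return text
```

An allow-list is deliberately strict: it rejects some legitimate input in exchange for not having to anticipate every possible payload. It complements patching and is not a substitute for it.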

Step-by-Step Patching and Mitigation Guide

If your organization uses ServiceNow with AI capabilities, follow this structured approach to address this ServiceNow AI vulnerability:

Step 1: Identify Affected Systems

Inventory all ServiceNow instances in your environment. Check version numbers and determine which utilize AI capabilities (Now Intelligence, AI Search, Virtual Agent). Document instance URLs, administrators, and business criticality.
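The inventory step can be sketched as a small data model plus a filter that produces a prioritized patch queue. Everything specific here is a placeholder: the release names, the `FIXED_IN` patch numbers, and the criticality scale are hypothetical and must be replaced with values from ServiceNow's advisory and your own asset records.

```python
from dataclasses import dataclass, field


@dataclass
class Instance:
    url: str
    release: str                # release family name (placeholder values below)
    patch: int                  # installed patch number
    ai_features: set = field(default_factory=set)
    criticality: int = 3        # 1 = most business-critical


# Hypothetical: first patch number per release that contains the fix.
FIXED_IN = {"release-a": 5, "release-b": 2}


def patch_queue(instances: list) -> list:
    """Return AI-enabled instances below the fixed patch, most critical first."""
    exposed = [
        inst for inst in instances
        if inst.ai_features
        # Unknown releases are treated as exposed (fail safe).
        and inst.patch < FIXED_IN.get(inst.release, float("inf"))
    ]
    return sorted(exposed, key=lambda inst: inst.criticality)
```

Treating unknown releases as exposed errs on the side of caution: an instance nobody can classify is exactly the one most likely to be missed.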

Step 2: Apply Official Patches

Download and apply the official ServiceNow patches for your specific release. Follow ServiceNow's patch documentation carefully. Test in a non-production environment first if possible.

Step 3: Implement Compensating Controls

If immediate patching isn't possible, implement temporary controls:

- Restrict AI functionality to essential users only

- Implement web application firewall (WAF) rules to block suspicious patterns

- Increase monitoring of AI component logs

- Consider disabling non-critical AI features temporarily
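Two of the controls above, restricting AI functionality to essential users and disabling non-critical features, amount to a simple gate in front of each AI feature. The sketch below shows that logic in isolation. The role and feature names are invented for illustration; in practice this would be expressed through the platform's own access controls rather than custom code.

```python
# Hypothetical role and feature names, for illustration only.
ESSENTIAL_ROLES = {"service_desk_lead", "platform_admin"}
DISABLED_FEATURES = {"ai_summarize"}


def ai_access_allowed(roles: set, feature: str) -> bool:
    """Allow an AI feature only if it is enabled and the user holds an essential role."""
    if feature in DISABLED_FEATURES:
        return False  # temporarily switched off for everyone
    return bool(roles & ESSENTIAL_ROLES)
```

Checking the disabled list first means a feature switch-off cannot be bypassed by any role, which is the behavior you want from an emergency control.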

Step 4: Verify and Validate

After patching, verify the fix:

- Test AI functionality to ensure it still works correctly

- Attempt to replicate the exploit (in a controlled environment) to confirm patching

- Check system logs for any residual suspicious activity

- Update your vulnerability management system to reflect the patched status

Step 5: Continuous Monitoring

Establish ongoing monitoring for similar vulnerabilities:

- Subscribe to ServiceNow security bulletins

- Implement regular vulnerability scanning of ServiceNow instances

- Create SIEM alerts for unusual AI query patterns or command execution

- Conduct periodic red team exercises focusing on AI components
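A SIEM alert for "unusual AI query patterns" often reduces to a rate check: too many suspicious events from one user in a short window. The class below is a minimal sliding-window sketch of that idea. The threshold, window size, and the notion of what counts as a "suspicious" event are assumptions you would define in your own detection pipeline.

```python
from collections import defaultdict, deque


class BurstDetector:
    """Flag a user once they reach `threshold` events within `window_seconds`."""

    def __init__(self, threshold: int = 5, window_seconds: float = 60.0):
        self.threshold = threshold
        self.window = window_seconds
        self._events = defaultdict(deque)  # user -> recent event timestamps

    def record(self, user: str, timestamp: float) -> bool:
        """Record one suspicious event; return True if an alert should fire."""
        recent = self._events[user]
        recent.append(timestamp)
        # Drop events that have aged out of the window.
        while recent and timestamp - recent[0] > self.window:
            recent.popleft()
        return len(recent) >= self.threshold
```

For example, feeding it every query rejected by an input filter would surface accounts that are probing the AI interface, which may indicate compromised credentials.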

Common Mistakes & AI Security Best Practices

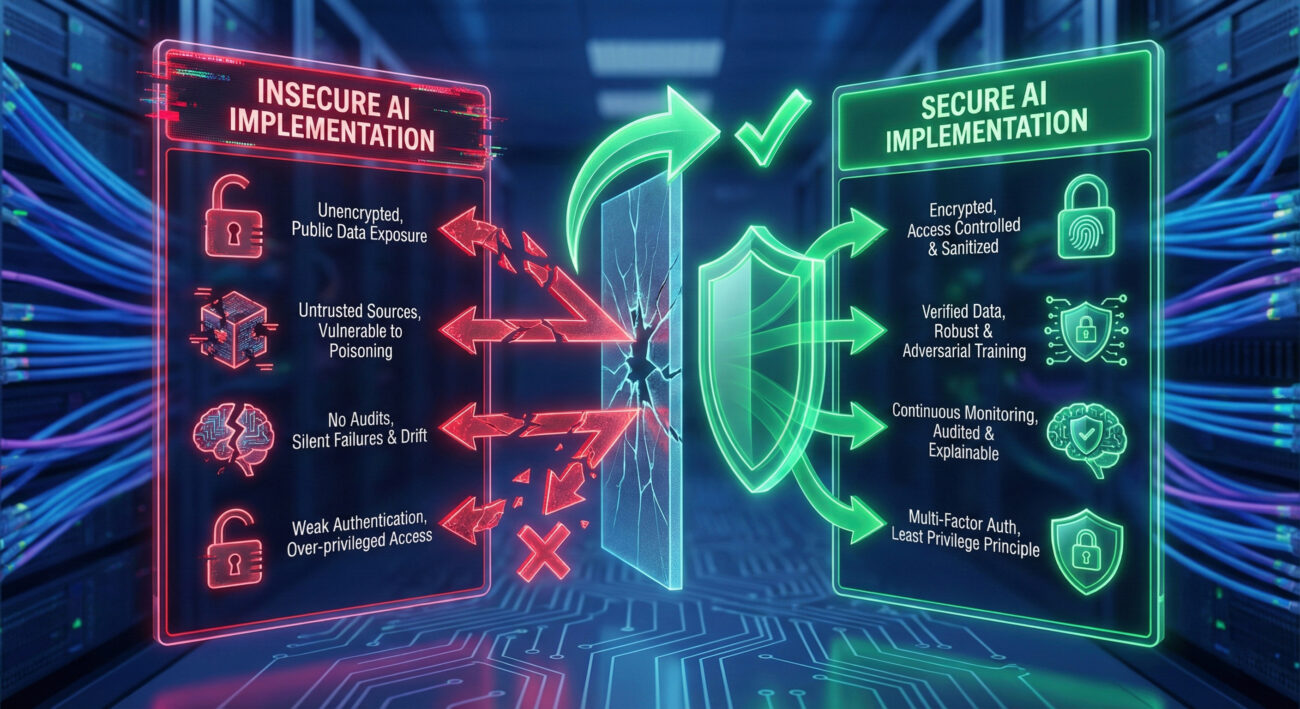

Common Security Mistakes with AI Platforms

- Assuming AI is inherently secure: Treating AI components as "magic" without proper security assessment

- Overprivileged AI service accounts: Giving AI components excessive system permissions

- Neglecting AI-specific patching: Focusing only on core platform updates while ignoring AI module patches

- Insufficient input validation: Trusting AI to handle all input sanitization automatically

- Lack of AI activity monitoring: Not logging or reviewing AI interactions for anomalies

AI Security Best Practices

- Apply the principle of least privilege: Restrict AI component permissions to only what's necessary

- Implement defense in depth: Use multiple security layers around AI systems

- Regular AI security assessments: Include AI components in penetration testing and code reviews

- Secure AI training data: Protect the data used to train and fine-tune AI models

- Monitor for model poisoning: Watch for attempts to corrupt AI behavior through malicious inputs

- Maintain an AI asset inventory: Know all AI components in your environment and their risk profiles
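The least-privilege practice above can be checked mechanically by comparing what each AI service account has been granted with what it actually needs. This sketch assumes you can export both as plain sets of permission names. The account and permission names used with it are hypothetical.

```python
def audit_permissions(granted: dict, required: dict) -> dict:
    """Map each account to the sorted permissions it holds but does not need."""
    findings = {}
    for account, perms in granted.items():
        # An account with no documented requirement should hold nothing.
        extra = set(perms) - set(required.get(account, set()))
        if extra:
            findings[account] = sorted(extra)
    return findings
```

Accounts with no documented requirements are reported in full, which nudges teams to write those requirements down.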

Implementation Framework for AI Security

Based on this ServiceNow AI vulnerability incident, organizations should adopt a structured AI security framework:

| Framework Component | Description | Implementation Steps |
|---|---|---|
| AI Governance | Policies and oversight for AI security | 1. Establish AI security policy; 2. Define AI risk assessment process; 3. Assign AI security responsibilities |
| AI Security Testing | Regular assessment of AI components | 1. Include AI in penetration testing; 2. Conduct adversarial ML testing; 3. Perform AI code security reviews |
| AI Monitoring | Continuous oversight of AI operations | 1. Log all AI interactions; 2. Monitor for anomalous patterns; 3. Implement AI-specific alerts |
| AI Incident Response | Preparedness for AI security incidents | 1. Create AI incident response plan; 2. Train team on AI incident handling; 3. Conduct AI breach simulations |
| AI Patch Management | Systematic updating of AI components | 1. Maintain AI component inventory; 2. Subscribe to AI security alerts; 3. Establish AI patching SLAs |
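The "AI patching SLAs" item in the table can be made concrete by deriving a deadline from a CVSS score. The severity bands below follow the standard CVSS v3 qualitative scale. The number of days per band is a hypothetical policy, not a recommendation from ServiceNow or any standard.

```python
from datetime import date, timedelta
from typing import Optional

# Hypothetical policy: days allowed to patch, by severity band.
SLA_DAYS = {"critical": 2, "high": 7, "medium": 30, "low": 90}


def severity_band(cvss: float) -> str:
    """Map a CVSS v3 base score to its qualitative severity rating."""
    if not 0.0 <= cvss <= 10.0:
        raise ValueError("CVSS score must be between 0.0 and 10.0")
    if cvss >= 9.0:
        return "critical"
    if cvss >= 7.0:
        return "high"
    if cvss >= 4.0:
        return "medium"
    if cvss > 0.0:
        return "low"
    return "none"


def patch_deadline(cvss: float, disclosed: date) -> Optional[date]:
    """Return the date by which a patch is due, or None if no action is needed."""
    band = severity_band(cvss)
    if band == "none":
        return None
    return disclosed + timedelta(days=SLA_DAYS[band])
```

Under this example policy, a flaw scored above 9.0 would be due within two days of disclosure, which matches the "emergency change" treatment described earlier for critical issues.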

For further reading on AI security frameworks, consult these resources:

- NIST AI Risk Management Framework - Comprehensive guidance from the National Institute of Standards and Technology

- OWASP ML Security Top 10 - Community-driven list of critical ML security risks

- Google's AI Security Best Practices - Practical guidance for securing AI systems

- Microsoft AI Security Resources - Enterprise-focused AI security guidance

- CISA AI Security Initiative - Government resources on AI security

Frequently Asked Questions (FAQ)

Q: How do I know if my ServiceNow instance is affected by this AI vulnerability?

A: Check your ServiceNow version and installed plugins. If you're using ServiceNow's AI capabilities (Now Intelligence, AI Search, Virtual Agent with AI features), you're likely affected. ServiceNow has released specific patch advisories with affected version ranges.

Q: Can this vulnerability be exploited without authentication?

A: Based on available information, the attacker needs authenticated access to the ServiceNow instance. This highlights the importance of strong authentication controls and monitoring for credential compromise.

Q: What's the difference between traditional software vulnerabilities and AI-specific vulnerabilities?

A: Traditional vulnerabilities often involve memory corruption or logic errors. AI vulnerabilities frequently involve data poisoning, model manipulation, or input handling issues specific to how AI processes information. This ServiceNow AI vulnerability is a hybrid: a traditional input validation flaw located in AI components.

Q: How can beginners start learning about AI security?

A: Start with foundational cybersecurity knowledge, then explore AI/ML concepts. Practical steps include: 1) Take introductory cybersecurity courses, 2) Learn basic AI/ML principles, 3) Practice with AI security tools like IBM's Adversarial Robustness Toolbox, 4) Follow AI security researchers and communities.

Q: Are there tools to scan for AI vulnerabilities?

A: Some tooling exists, though much of it assesses models rather than scanning for software flaws: 1) Microsoft's Responsible AI Toolbox (model assessment and debugging), 2) IBM's AI Explainability 360 (model explainability), 3) Commercial AI security platforms from vendors like HiddenLayer and Robust Intelligence. Traditional vulnerability scanners may not detect AI-specific issues.

Key Takeaways

1. AI Systems Expand Attack Surfaces: The integration of AI capabilities into platforms like ServiceNow creates new vulnerability vectors that require specific security attention.

2. Patch Management is Non-Negotiable: This ServiceNow AI vulnerability underscores the critical importance of timely patching, especially for AI components that might be overlooked in standard update processes.

3. Authentication Alone Isn't Enough: While authentication is required for this exploit, it's not sufficient protection. Defense in depth with input validation, monitoring, and least privilege is essential.

4. AI Security Requires Specialized Knowledge: Protecting AI systems requires understanding both traditional security principles and AI-specific risks like data poisoning, model inversion, and adversarial examples.

5. Proactive AI Security Posture: Organizations should establish AI security frameworks before incidents occur, including governance, testing, monitoring, and incident response specific to AI systems.

Call to Action: Secure Your AI Systems Today

Don't Wait for an AI Security Breach

This ServiceNow AI vulnerability serves as a wake-up call for all organizations using AI technologies. Take these immediate actions:

- Patch affected ServiceNow instances immediately

- Conduct an inventory of all AI systems in your environment

- Review and strengthen AI security controls

- Educate your team on AI security risks and best practices

For cybersecurity beginners, this incident represents both a warning and an opportunity. AI security expertise is becoming increasingly valuable. Start your learning journey today by exploring the resources mentioned in this article and considering specialized training in AI security.

Remember: In cybersecurity, being proactive about secure practices is always better than reacting to a breach.

© 2026 Cyber Pulse Academy. This content is provided for educational purposes only.

Always consult with security professionals for organization-specific guidance.