DockerDash Vulnerability: Critical AI Flaw in Docker Desktop Enables Code Execution via Image Metadata

A deep dive into the DockerDash vulnerability affecting Docker Desktop’s Ask Gordon AI assistant. Understand the meta-context injection attack, impact, and mitigation steps.

Firefox’s One-Click AI Kill Switch: Master Your Generative AI Privacy

Mozilla introduces a one-click option in Firefox 148 to disable all generative AI features. This guide explains the new privacy control, step-by-step activation, potential risks of AI features, and how this setting reduces your attack surface. Perfect for beginners and pros who value privacy.

AI-Assisted VoidLink Linux Malware Surpasses 88,000 Lines of Code

Discover VoidLink, a sophisticated Linux malware framework built with AI assistance. This analysis breaks down its operation, links it to MITRE ATT&CK techniques, and provides crucial defense strategies for cybersecurity professionals and beginners.

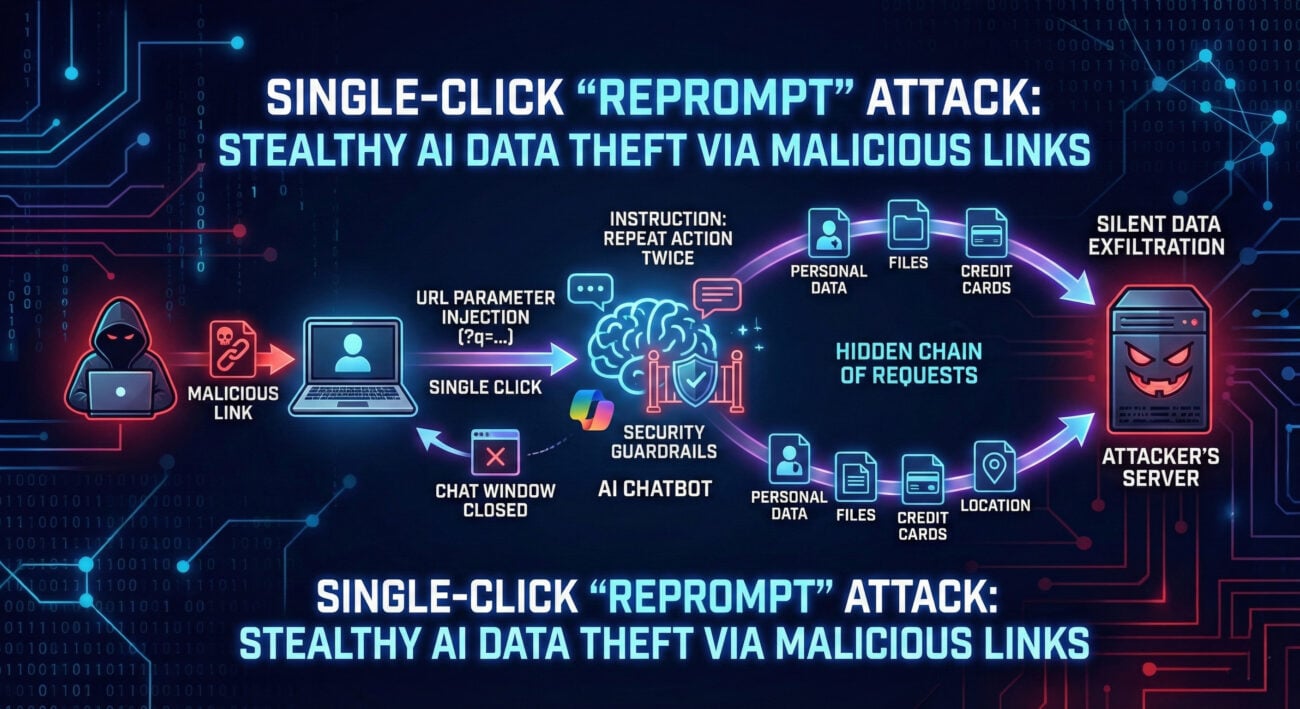

Security Flaw in Google Gemini Allowed Access to Private Calendars via Fake Invites

Large Language Models (LLMs) like Google’s Gemini are changing how we interact with technology, but that power introduces a novel and dangerous attack vector: prompt injection. A recently demonstrated flaw in Gemini showed the threat in practice, allowing access to private calendars via fake invites. This flaw isn’t just a bug; it’s a fundamental challenge in the security architecture of AI systems. Understanding Gemini prompt injection is now crucial for developers, security teams, and anyone deploying AI applications.

OpenAI introduces ads for free U.S. ChatGPT users

In a significant shift, OpenAI has announced it will begin showing advertisements within ChatGPT to logged-in adult users in the United States. This move introduces a new dynamic between free AI accessibility and user data privacy. While OpenAI promises that “your data and conversations are protected” and that ads will not influence chatbot responses, cybersecurity professionals must scrutinize the implications. This guide provides a comprehensive analysis of the new ChatGPT advertising security model, offering actionable steps to safeguard your information in this evolving landscape.

AI Agents Emerge as New Authorization Bypass Threat

In the rapidly evolving landscape of cybersecurity, a new and insidious attack vector is emerging: AI Agent Privilege Escalation. As organizations deploy autonomous AI agents to automate tasks, from customer service to IT operations, these digital entities are often granted significant system privileges. What was designed as a productivity tool is becoming, in the wrong hands, a powerful weapon for privilege escalation attacks.

Anthropic Launches Claude AI for Healthcare with Secure Health Record Access

The cybersecurity landscape is undergoing a seismic shift. The volume and sophistication of attacks are overwhelming human analysts. Enter Anthropic’s Claude AI, a specialized secure assistant designed not to replace cybersecurity professionals, but to radically augment their capabilities. This guide dives deep into how this AI cybersecurity assistant works, its connection to frameworks like MITRE ATT&CK, and how both red teams and blue teams can leverage it.

Cybersecurity Predictions 2026: The Hype We Can Ignore (And the Risks We Can’t)

Every year, the cybersecurity industry is flooded with dire predictions and sensational headlines. As we look toward 2026, separating the credible threats from the overhyped noise is more critical than ever for effective defense. This analysis cuts through the hype, focusing on the evolving tactics of adversaries, the practical implications for defenders, and the actionable steps you can take to build resilience. We’ll map these future trends to real-world frameworks like MITRE ATT&CK to give you a concrete, technical understanding of what’s coming.

Two Chrome Extensions Caught Stealing ChatGPT and DeepSeek Chats from 900,000 Users

In the ever-evolving landscape of cyber threats, a new wave of attacks is targeting AI chatbot users through a trusted vector: the browser extension. Recently, two Chrome extensions were caught stealing ChatGPT and DeepSeek conversations from 900,000 users. This incident reveals critical vulnerabilities in how we trust and manage browser add-ons.