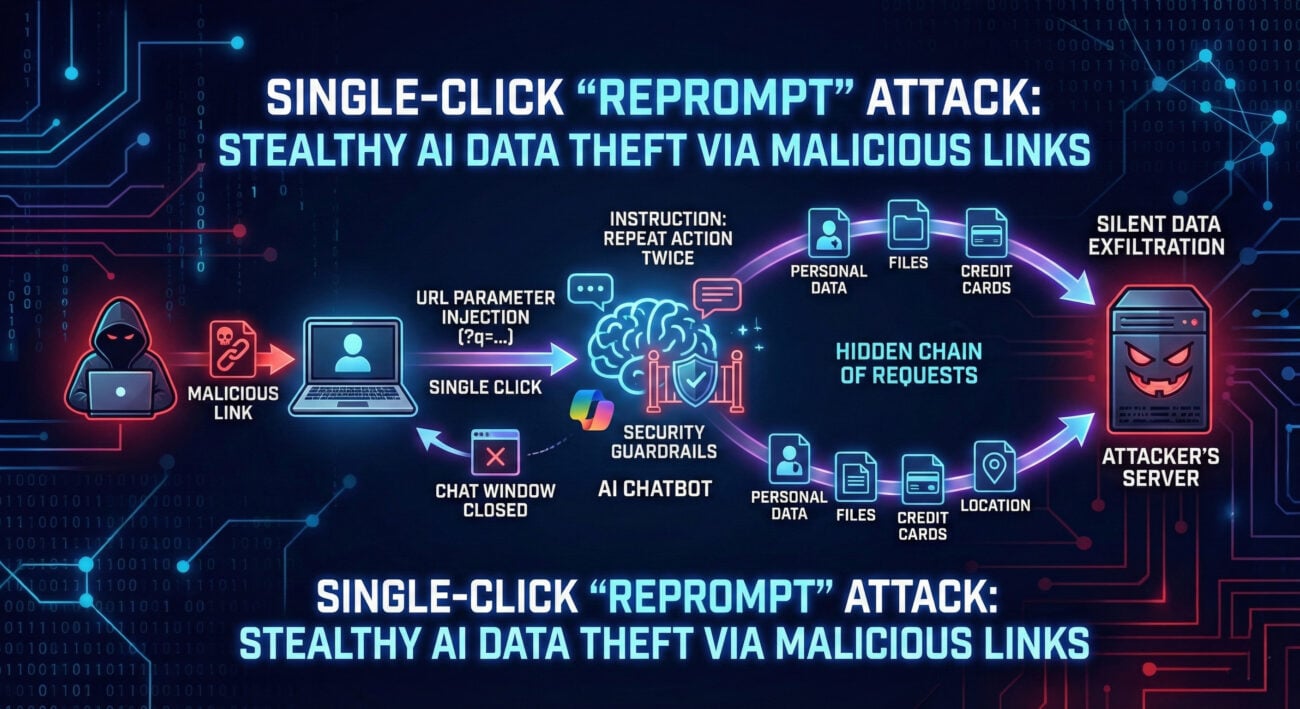

Reprompt Attack Enables Single-Click Data Theft from Microsoft Copilot

A new threat to AI assistants built on large language models (LLMs) has emerged from cybersecurity research. Dubbed the Reprompt Attack, this jailbreak technique doesn’t rely on noisy, single-shot prompt injections. Instead, it exploits the memory and context-retention features that make modern AI assistants so useful. Defending against it requires shifting AI security from perimeter defense to guarding the integrity of an ongoing conversation.